Source: https://xkcd.com/3049/

I’m doing something that I should have done a long time ago: collect past series of posts into a single, easy-to-reference post. The following posts formed my series on computing square roots and logarithms without a calculator (with the latest post added).

Part 1: Method #1: Trial and error.

Part 2: Method #2: An algorithm comparable to long division.

Part 3: Method #3: Introduction to logarithmic tables.

Part 4: Finding antilogarithms with a table.

Part 5: Pedagogical and historical thoughts on log tables.

Part 6: Computation of square roots using a log table.

Part 7: Method #4: Slide rules

Part 8: Method #5: By hand, using a couple of known logarithms base 10, the change of base formula, and the Taylor approximation .

Part 9: An in-class activity for getting students comfortable with logarithms when seen for the first time.

Part 10: Method #6: Mentally… anecdotes from Nobel Prize-winning physicist Richard P. Feynman and me.

Part 11: Method #7: Newton’s Method.

Part 12: Method #8: The formula

Source: https://xkcd.com/2711/

In my capstone class for future secondary math teachers, I ask my students to come up with ideas for engaging their students with different topics in the secondary mathematics curriculum. In other words, the point of the assignment was not to devise a full-blown lesson plan on this topic. Instead, I asked my students to think about three different ways of getting their students interested in the topic in the first place.

I plan to share some of the best of these ideas on this blog (after asking my students’ permission, of course).

This student submission comes from my former student Jonathan Chen. His topic, from Precalculus: computing logarithms with base 10.

What interesting (i.e., uncontrived) word problems using this topic can your students do now?

Computing logarithms with base 10 appears in many scientific applications that lend themselves to word problems. To define the acidity or alkalinity of a substance, chemists use the formula $\mathrm{pH} = -\log[\mathrm{H}^+]$. “[H+] is the hydrogen ion concentration that is measured in moles per liter” (Stapel, n.d.). We know lemon juice is acidic because its pH value is less than 7. We know bleach is basic because its pH value is greater than 7. When a pH value is equal to 7, the solution is neutral. An example of something neutral would be pure water. Teachers can create word problems based on the information given about a liquid solution. Noise can be measured in decibels. The formula used to measure the strength of a sound is

$\mathrm{dB} = 10 \log\left(\frac{I}{I_0}\right)$. “I0 is the intensity of ‘threshold sound,’ or sound that can barely be perceived” (Stapel, n.d.). Teachers can create word problems based on the defined terms of how many times more intense a sound is than the threshold sound. Similar problems with the topic of computing logarithms can be made involving earthquake intensity.
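As a quick numerical companion to these two formulas, here is a minimal sketch in Python. It assumes the standard forms $\mathrm{pH} = -\log[\mathrm{H}^+]$ and $\mathrm{dB} = 10\log(I/I_0)$; the function names and the sample inputs (a hydrogen ion concentration of $10^{-7}$ moles per liter for pure water, and a sound 1000 times the threshold intensity) are illustrative choices, not values taken from the student's submission.

```python
import math

def ph(hydrogen_ion_concentration):
    """pH = -log10([H+]), with [H+] in moles per liter."""
    return -math.log10(hydrogen_ion_concentration)

def decibels(intensity_ratio):
    """dB = 10 * log10(I / I0), given the ratio I / I0 directly."""
    return 10 * math.log10(intensity_ratio)

# Illustrative inputs (assumptions, not data from the post):
water_ph = ph(1e-7)            # neutral solution, pH about 7
loudness = decibels(1000)      # sound 1000 times the threshold intensity

print(f"pH of pure water: {water_ph:.2f}")
print(f"Loudness at 1000 x threshold: {loudness:.1f} dB")
```

A word problem can then be checked by swapping in the concentration or intensity ratio it provides.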

How can this topic be used in your students’ future courses in mathematics or science?

As shown in the above answer, this topic can reappear in students’ future science courses in the topics of pH levels, earthquake intensity, or “loudness” measured in decibels. In order to find the pH level, the [H+] concentration, or the [OH–] concentration, you may need to know how to calculate logarithms with base 10 when dealing with the equation . Similar things can be said about measuring “loudness” and earthquake intensity: their formulas involve calculating logarithms with base 10. Other future topics students may encounter in mathematics are logarithmic functions, Euler’s number, the natural log, and logarithm rules. While not all of these future topics are strongly related to calculating logarithms with base 10, they are loosely connected: practice with base-10 logarithms makes these later topics easier to understand and work with. The logarithm rules, in turn, help students simplify and calculate logarithms with base 10.

How was this topic adopted by the mathematical community?

Logarithms have been around since 1614, when John Napier published his invention, and ever since then small additions have been made. Additions such as a logarithmic table made it easier to solve logarithmic problems. The logarithmic tables are similar to the multiplication tables that elementary schoolers memorize to calculate simple multiplication faster for their future problems. Many mathematicians made their contributions to add more to the logarithmic table to the point where the calculations reached up to 200,000. Aside from the logarithmic tables, there were other methods to calculate logarithms with base 10 such as the slide rule. It was also possible to memorize the values of the logs with base 10 of 1 through 10 and use the logarithmic rules to calculate bigger values. Because

by expansion and logarithmic rules, people can solve this problem by memorizing that and knowing that

. Knowing this makes the equation clearer to recognize and easier to solve by hand. Hand calculation of logarithms with base 10 was used extensively until the creation of the calculator made it easier to calculate anything, including logarithms.

References

“The Log Log Duplex Trig” “Slide Rule”. (n.d.). Retrieved from Web Archive: https://web.archive.org/web/20090214020502/http://www.mccoys-kecatalogs.com/K%26EManuals/4081-3_1943/4081-3_1943.htm

Bourne, M. (n.d.). 4. Logarithms to Base 10. Retrieved from Interactive Mathematics: https://www.intmath.com/exponential-logarithmic-functions/4-logs-base-10.php

Calculating Base 10 Logarithms in your Head. (n.d.). Retrieved from Nerd Paradise: https://nerdparadise.com/math/base10logs

John Napier and the invention of logarithms, 1614; a lecture. (n.d.). Retrieved from Archive.org: https://archive.org/details/johnnapierinvent00hobsiala/page/18/mode/2up

Stapel, E. (n.d.). Logarithmic Word Problems. Retrieved from Purple Math: https://www.purplemath.com/modules/expoprob.htm

This student submission comes from my former student Andrew Sansom. His topic, from Precalculus: computing logarithms with base 10.

How can technology (YouTube, Khan Academy [khanacademy.org], Vi Hart, Geometer’s Sketchpad, graphing calculators, etc.) be used to effectively engage students with this topic?

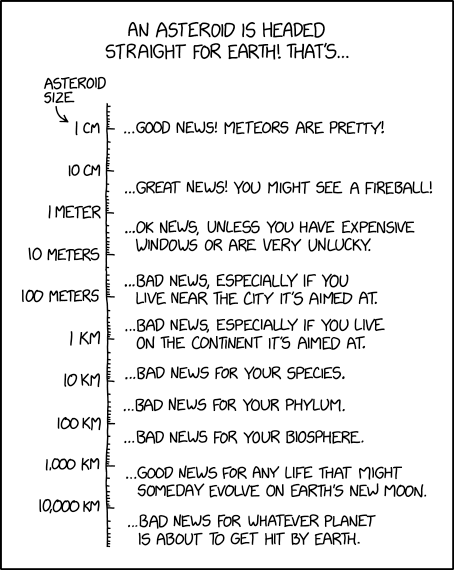

The slide rule was originally invented around 1620, shortly after Napier invented the logarithm. In its simplest form, it uses two logarithmic scales that slide past each other, allowing one to multiply and divide numbers easily. If the scales were linear, aligning them would add two numbers together, but the logarithmic scale turns this into a multiplication problem. For example, the below configuration represents the problem: $14 \times 18 = 252$.

Because of log rules, the above problem can be represented as:

$\log 14 + \log 18 = \log(14 \times 18) = \log 252$

The C-scale is aligned against the 14 on the D-scale. The reticule is then translated so that it is over the 18 on the C-scale. The sum of the log of these two values is the log of their product.
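The idea that sliding one logarithmic scale along another adds logarithms can be mimicked numerically. The following is only a rough sketch in Python: the helper `scale_position` is a made-up name for "distance along a logarithmic scale," and the code imitates the arithmetic behind the slide rule rather than its physical markings.

```python
import math

def scale_position(x):
    """Distance of x along a base-10 logarithmic scale (0 at x = 1)."""
    return math.log10(x)

a, b = 14, 18

# Sliding the C-scale to 14 and reading under 18 adds the two distances.
total_distance = scale_position(a) + scale_position(b)

# Converting the combined distance back to a number gives the product.
product = 10 ** total_distance

print(f"log {a} + log {b} = {total_distance:.5f}")
print(f"{a} x {b} is approximately {product:.3f}")
```

On a real slide rule the reading is limited to about three significant digits, which is why the race described below works best with numbers of that size.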

Most modern students have never seen a slide rule before, and those who have heard of one probably know little about it other than the cliché “we put men on the moon using slide rules!” Consequently, slide rules are quite novel for students. A particularly fun, engaging activity to demonstrate to students the power of logarithms would be to challenge volunteers to a race. The student must multiply two three-digit numbers on the board, while the teacher uses a slide rule to do the same computation. Doubtless, a proficient slide rule user will win every time. This activity can be done briefly but will energize the students and show them that there may be something more to this “whole logarithm idea” instead of some abstract thing they’ll never see again.

How can this topic be used in your students’ future courses in mathematics or science?

Computing logarithms with base 10, especially when using logarithm properties, leads naturally to computing logarithms in other bases. This generalizes further to logarithmic functions, which are among the precalculus concepts most useful in calculus. Integrals of rational functions often become problems involving logarithms and log properties. Without mastery of these rudimentary skills, a student will quickly be unable to handle those problems. Many limits, including the limit definition of e, Euler’s number, are typically evaluated using logarithms.

Outside of pure math classes, the decibel is a common unit for quantities measured on a base-10 logarithmic scale. It is particularly relevant in acoustics and circuit analysis, both topics in physics classes. In chemistry, the pH of a solution is defined as the negative base-ten logarithm of the concentration of hydrogen ions in that solution. Acidity is a crucially important topic in high school chemistry.

What interesting (i.e., uncontrived) word problems using this topic can your students do now?

Many word problems could be easily constructed involving computations of logarithms of base 10. Below is a problem involving earthquakes and the Richter scale. It would not be difficult to make similar problems involving the volume of sounds, the signal to noise ratio of signals in circuits, or the acidity of a solution.

The Richter Scale is used to measure the strength of earthquakes. It is defined as

$M = \log\left(\frac{I}{S}\right)$

where $M$ is the magnitude, $I$ is the intensity of the quake, and $S$ is the intensity of a “standard quake”. In 1965, an earthquake with magnitude 8.7 was recorded on the Rat Islands in Alaska. If another earthquake was recorded in Asia that was half as intense as the Rat Islands quake, what would its magnitude be?

Solution:

First, substitute our known quantity into the equation.

$8.7 = \log\left(\frac{I_{\text{Rat}}}{S}\right)$

Next, solve for the intensity of the Rat Islands quake.

$I_{\text{Rat}} = 10^{8.7}\, S$

Now, substitute the intensity of the new quake into the original equation.

$M_{\text{new}} = \log\left(\frac{0.5 \cdot 10^{8.7}\, S}{S}\right) = 8.7 + \log 0.5 \approx 8.399$

Thus, the new quake has magnitude 8.399 on the Richter scale.
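The solution can be double-checked numerically. This is a minimal sketch in Python, assuming the definition $M = \log(I/S)$ with intensities expressed as multiples of the standard quake; the function and variable names are illustrative. Since $\log 0.5 \approx -0.301$, the result should land near $8.7 - 0.301 \approx 8.399$.

```python
import math

def richter_magnitude(intensity_ratio):
    """Magnitude M = log10(I / S), given the ratio I / S directly."""
    return math.log10(intensity_ratio)

# Intensity of the Rat Islands quake, as a multiple of the standard quake.
rat_islands_ratio = 10 ** 8.7

# The second quake is half as intense.
new_quake_magnitude = richter_magnitude(0.5 * rat_islands_ratio)

print(f"Magnitude of the new quake: {new_quake_magnitude:.3f}")
```

Note that halving the intensity lowers the magnitude by only about 0.3, a useful talking point about logarithmic scales.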

References:

Earthquake data from Wikipedia’s List of Earthquakes (https://en.wikipedia.org/wiki/Lists_of_earthquakes#Largest_earthquakes_by_magnitude)

Slide rule picture is a screenshot of Derek Ross’s Virtual Slide Rule (http://www.antiquark.com/sliderule/sim/n909es/virtual-n909-es.html)

I’m doing something that I should have done a long time ago: collecting a series of posts into one single post. The following links comprised my series on the decimal expansions of logarithms.

Part 1: Pedagogical motivation: how can students develop a better understanding for the apparently random jumble of digits in irrational logarithms?

Part 2: Idea: use large powers.

Part 3: Further idea: use very large powers.

Part 4: Connect to continued fractions and convergents.

Part 5: Tips for students to find these very large powers.

While some common (i.e., base-10) logarithms work out evenly, like , most do not. Here is the typical output when a scientific calculator computes a logarithm:

In today’s post, I’ll summarize the past few posts to describe how talented Algebra II students, who have just been introduced to logarithms, can develop proficiency with the Laws of Logarithms while also understanding that the above answer is not just a meaningless jumble of digits. The only tools students will need are

To estimate $\log_{10} 5.1264$, Algebra II students can try to find a power of 5.1264 that is close to a power of 10. In principle, this can be done by just multiplying by $5.1264$ until an answer decently close to a power of 10 arises. For the teacher who’s guiding students through this exploration, it might be helpful to know the answer ahead of time.

One way to do this is to use Wolfram Alpha to find the convergents of $\log_{10} 5.1264$. If you click this link, you’ll see that I entered

Convergents[Log[10,5.1264],15]

A little explanation is in order:

From Wolfram Alpha, I see that is the last convergent with a numerator less than 100. For the purposes of this exploration, I interpret these fractions as follows:

In other words, the denominator of the convergent gives the exponent for

, while the numerator gives the exponent for the approximated power of 10. Continuing with the Laws of Logarithms,

A quick check with a calculator shows that this approximation is accurate to three decimal places. This alone should convince many students that the above apparently random jumble of digits is not so random after all.
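For teachers who would rather not rely on Wolfram Alpha, the convergents can also be generated with a few lines of Python. This is only a sketch: the helper `convergents` is a hypothetical function written for this illustration (it is not how Wolfram Alpha computes its answer), and because it starts from a floating-point logarithm, only the first several convergents should be trusted.

```python
import math
from fractions import Fraction

def convergents(x, count):
    """Return up to `count` continued-fraction convergents of the float x."""
    frac = Fraction(x)            # exact rational value of the float
    h_prev, h = 0, 1              # seeds for the numerator recurrence
    k_prev, k = 1, 0              # seeds for the denominator recurrence
    result = []
    for _ in range(count):
        a = frac.numerator // frac.denominator   # next partial quotient
        h_prev, h = h, a * h + h_prev
        k_prev, k = k, a * k + k_prev
        result.append(Fraction(h, k))
        frac -= a
        if frac == 0:
            break
        frac = 1 / frac
    return result

target = math.log10(5.1264)
convs = convergents(target, 6)

# Last convergent whose numerator is less than 100.
best = [c for c in convs if c.numerator < 100][-1]
power = 5.1264 ** best.denominator

print("Convergents:", ", ".join(str(c) for c in convs))
print(f"5.1264^{best.denominator} = {power:.4e}, close to 10^{best.numerator}")
print(f"{best} = {float(best):.6f} versus log10(5.1264) = {target:.6f}")
```

The denominator of the chosen convergent is the exponent to try on 5.1264, and the numerator is the matching power of 10, exactly as in the interpretation above.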

While the above discussion should be enough for many students, some students may want to know how to find the rest of the decimal places with this technique. To answer this question, we again turn to the convergents of from Wolfram Alpha. From this list, we see that

is the first convergent with a denominator at least six digits long. The student therefore has two options:

Option #1. Ask the student to use Wolfram Alpha to raise to the denominator of this convergent. Surprisingly to the student, but not surprisingly to the teacher who knows about this convergent, the answer is very close to a power of 10:

. The student can then use the Laws of Logarithms as before:

,

which matches the output of the calculator.

Option #2. Ask the student to “trick” a hand-held calculator into finding . This option requires the use of the convergent with the largest numerator less than 100, which was

.

In this example, the is of course superfluous, but I include it here to show where the remainder should be placed. Entering this in a calculator yields a result that is close to

. (The teacher should be aware that some of the last few digits may differ from the more precise result given by Wolfram Alpha due to round-off error, but this discrepancy won’t matter for the purposes of the student’s explorations.) In other words,

,

which may be rearranged as

after using the Laws of Exponents. From this point, the derivation follows the steps in Option #1.

While some common (i.e., base-10) logarithms work out evenly, like , most do not. Here is the typical output when a scientific calculator computes a logarithm:

To a student first learning logarithms, the answer is just an apparently random jumble of digits; indeed, it can be proven that the answer is irrational. With a little prompting, a teacher can get his/her students wondering about how people 50 years ago could have figured this out without a calculator. This leads to a natural pedagogical question:

Can good Algebra II students, using only the tools at their disposal, understand how decimal expansions of base-10 logarithms could have been found before computers were invented?

Here’s a trial-and-error technique — an exploration activity — that is within the grasp of Algebra II students. It’s simple to understand; it’s just a lot of work. The only tools that are needed are

In the previous post in this series, we found that

and

.

Using the Laws of Logarithms on the latter provides an approximation of that is accurate to an astounding ten decimal places:

.

Compare with:

Since hand-held calculators will generate identical outputs for these two expressions (up to the display capabilities of the calculator), this may lead to the misconception that the irrational number is actually equal to the rational number

, so I’ll emphasize again that these two numbers are not equal but are instead really, really close to each other.

We now turn to a question that was deferred in the previous post.

Student: How did you know to raise 3 to the 323,641st power?

Teacher: I just multiplied 3 by itself a few hundred thousand times.

Student: C’mon, really. How did you know?

While I don’t doubt that some of our ancestors used this technique to find logarithms — at least before the discovery of calculus — today’s students are not going to be that patient. Instead, to find suitable powers quickly, we will use ideas from the mathematical theory of continued fractions: see Wikipedia, Mathworld, or this excellent self-contained book for more details.

To approximate $\log_{10} 3$, the technique outlined in this series suggests finding integers $m$ and $n$ so that

$3^n \approx 10^m$,

or, equivalently,

$\log_{10} 3 \approx \frac{m}{n}$.

In other words, we’re looking for rational numbers that are reasonably close to $\log_{10} 3$. Terrific candidates for such rational numbers are the convergents to the continued fraction expansion of $\log_{10} 3$. I’ll defer to the references above for how these convergents can be computed, so let me cut to the chase. One way these can be quickly obtained is the free website Wolfram Alpha. For example, the first few convergents of $\log_{10} 3$

are

.

A larger convergent is , our familiar friend from the previous post in this series.

As more terms are taken, these convergents get closer and closer to . In fact:

So convergents provide a way for teachers to maintain the illusion that they found a power like by laborious calculation, when in fact they were quickly found through modern computing.

While some common (i.e., base-10) logarithms work out evenly, like , most do not. Here is the typical output when a scientific calculator computes a logarithm:

To a student first learning logarithms, the answer is just an apparently random jumble of digits; indeed, it can be proven that the answer is irrational. With a little prompting, a teacher can get his/her students wondering about how people 50 years ago could have figured this out without a calculator. This leads to a natural pedagogical question:

Can good Algebra II students, using only the tools at their disposal, understand how decimal expansions of base-10 logarithms could have been found before computers were invented?

Here’s a trial-and-error technique — an exploration activity — that is within the grasp of Algebra II students. It’s simple to understand; it’s just a lot of work.

To approximate $\log_{10} 3$, look for integer powers of $3$ that are close to powers of 10.

In the previous post in this series, we essentially used trial and error to find such powers of 3. We found

$3^{153} \approx 10^{73}$,

from which we can conclude

$\log_{10} 3 \approx \frac{73}{153} \approx 0.477124$.

This approximation is accurate to five decimal places.
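The trial-and-error search itself is easy to automate. The sketch below, in Python, keeps multiplying by 3 using exact integer arithmetic and reports any power that lands within 1% of a power of 10. The function name, the 1% tolerance, and the cutoff of 200 for the exponent are assumptions chosen for illustration.

```python
from fractions import Fraction

def near_powers_of_ten(base, max_exponent, tolerance):
    """Return (n, m) pairs where base**n is within `tolerance` of 10**m."""
    hits = []
    power = 1
    for n in range(1, max_exponent + 1):
        power *= base
        digits = len(str(power))   # 10**(digits - 1) <= power < 10**digits
        for m in (digits - 1, digits):
            # Relative distance between base**n and 10**m, computed exactly.
            if abs(Fraction(power, 10 ** m) - 1) <= tolerance:
                hits.append((n, m))
    return hits

hits = near_powers_of_ten(3, 200, Fraction(1, 100))
for n, m in hits:
    print(f"3^{n} is within 1% of 10^{m}, so log10(3) is roughly {m}/{n} = {m / n:.6f}")
```

Loosening the tolerance turns up more (but cruder) candidates, which mirrors what students experience when doing the search by hand.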

By now, I’d imagine that our student would be convinced that logarithms aren’t just a random jumble of digits… there’s a process (albeit a complicated process) for obtaining these decimal expansions. Of course, this process isn’t the best process, but it works and it only uses techniques at the level of an Algebra II student who’s learning about logarithms for the first time.

If nothing else, hopefully this lesson will give students a little more appreciation for their ancestors who had to perform these kinds of calculations without the benefit of modern computing.

We also saw in the previous post that larger powers can result in better and better approximation. Finding suitable powers gets harder and harder as the exponent gets larger. However, when a better approximation is found, the improvement can be dramatic. Indeed, the decimal expansion of a logarithm can be obtained up to the accuracy of a hand-held calculator with a little patience. For example, let’s compute

Predictably, the complaint will arise: “How did you know to try ?” The flippant and awe-inspiring answer is, “I just kept multiplying by 3.”

I’ll give the real answer to that question later in this series.

Postponing the answer to that question for now, there are a couple ways for students to compute this using readily available technology. Perhaps the most user-friendly is the free resource Wolfram Alpha:

.

That said, students can also perform this computation by creatively using their handheld calculators. Most calculators will return an overflow error if a direct computation of $3^{323641}$ is attempted; the number is simply too big. A way around this is by using the above approximation

$3^{153} \approx 10^{73}$, so that

$\frac{3^{153}}{10^{73}} \approx 1$. Therefore, we can take large powers of

$\frac{3^{153}}{10^{73}}$ without worrying about an overflow error.

In particular, let’s divide $323641$ by $153$. A little work shows that

$\frac{323641}{153} = 2115 + \frac{46}{153}$,

or

$323641 = 153 \times 2115 + 46$.

This suggests that we try to compute

$\left(\frac{3^{153}}{10^{73}}\right)^{2115} \times 3^{46}$,

and a hand-held calculator can be used to show that this expression is approximately equal to $10^{21}$. Some of the last few digits will be incorrect because of unavoidable round-off errors, but the approximation of

$10^{21}$ (all that’s needed for the present exercise) will still be evident.

By the Laws of Exponents, we see that

$3^{323641} \approx 10^{73 \times 2115} \times 10^{21} = 10^{154416}$.
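The hand-held calculator trick can be rehearsed in Python, whose floating-point numbers overflow in much the same way a calculator does. This is a sketch under the assumptions that the large power in question is $3^{323641}$, that $3^{153} \approx 10^{73}$ is the approximation being reused, and that $323641 = 153 \times 2115 + 46$; the variable names are illustrative.

```python
import math

# 3^153 / 10^73 is close to 1, so its powers stay within floating-point range.
ratio = 3.0 ** 153 / 10.0 ** 73

# Equals 3^323641 / 10^(73 * 2115), because 323641 = 153 * 2115 + 46.
scaled = ratio ** 2115 * 3.0 ** 46

# Recover the power of 10 that 3^323641 is close to.
exponent_of_ten = 73 * 2115 + round(math.log10(scaled))
approximation = exponent_of_ten / 323641

print(f"ratio  = {ratio:.6f}")
print(f"scaled = {scaled:.6e}")
print(f"3^323641 is close to 10^{exponent_of_ten}")
print(f"{exponent_of_ten}/323641 = {approximation:.12f}")
print(f"log10(3)      = {math.log10(3):.12f}")
```

As on a calculator, the last few digits of `scaled` carry round-off error, but its closeness to a power of 10 is all that matters here.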

Whichever technique is used, we can now use the Laws of Logarithms to approximate $\log_{10} 3$:

$\log_{10} 3 \approx \frac{154416}{323641}$.

This approximation matches the decimal expansion of $\log_{10} 3$ to an astounding ten decimal places:

Since hand-held calculators will generate identical outputs for these two expressions (up to the display capabilities of the calculator), this may lead to the misconception that the irrational number is actually equal to the rational number

, so I’ll emphasize again that these two numbers are not equal but are instead really, really close to each other.

Summarizing, Algebra II students can find the decimal expansion of $\log_{10} 3$ up to the accuracy of a hand-held scientific calculator. The only tools that are needed are

While I don’t have a specific reference, I’d be stunned if none of our ancestors tried something along these lines in the years between the discovery of logarithms (1614) and calculus (1666 or 1684).