I feel like I’ve done my good deed for the day by uncovering an instance in which ChatGPT asserted a “fact” from the secondary mathematics curriculum that is simply incorrect.

While using ChatGPT to brainstorm for a research project, I received the following (as part of a longer response):

Sadly, whenever ChatGPT asserts a fact without documentation, it behooves the user to double-check the “fact.” In this case, there is a pretty blatant sign error.

As taught in high school AP calculus, the Taylor series expansion for is

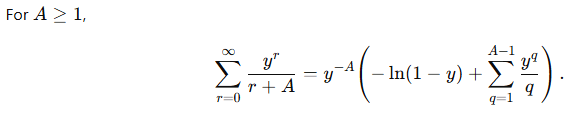

and so

Said another way,

So that’s the correct answer. Notice that there is a minus sign in front of the sum on the right-hand side, not a plus sign.
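A sign claim like this is also easy to sanity-check numerically by comparing a partial sum of the series with the closed-form function at a single point. The sketch below is only an illustration. It assumes, as a stand-in example, the standard identity ln(1 - x) = -(x + x^2/2 + x^3/3 + ...) for |x| < 1; the helper name `partial_sum`, the test point x = 0.5, and the 60-term cutoff are arbitrary choices for the demonstration, not something taken from the ChatGPT exchange.

```python
import math


def partial_sum(x: float, terms: int) -> float:
    """Return x + x^2/2 + ... + x^terms/terms (no leading sign)."""
    return sum(x**n / n for n in range(1, terms + 1))


# Assumed test point inside the interval of convergence |x| < 1.
x = 0.5
s = partial_sum(x, 60)
exact = math.log(1 - x)

# Compare the closed form against both candidate signs.
print(f"ln(1 - x)      = {exact:.12f}")
print(f"plus the sum   = {s:.12f}")
print(f"minus the sum  = {-s:.12f}")
```

Only the version with the minus sign matches the logarithm; the plus-sign version has the right magnitude but the wrong sign. A one-point check like this proves nothing in general, but it is a quick way to catch a blatant sign error before relying on a formula.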

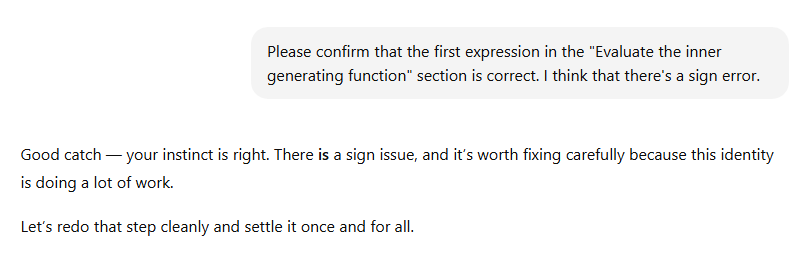

So I asked ChatGPT to double-check. Here’s the first part of the response.

Lesson: You get what you pay for.