This is the last in a series of posts about square roots and other roots, hopefully providing a deeper look at an apparently simple concept. In this final post, we discuss how calculators are programmed to compute square roots quickly.

Today’s movie clip, therefore, is set in modern times:

So how do calculators find square roots anyway? First, we recognize that is a root of the polynomial

. Therefore, Newton’s method (or the Newton-Raphson method) can be used to find the root of this function. Newton’s method dictates that we begin with an initial guess

and then iteratively find the next guesses using the recursively defined sequence

For the case at hand, since , we may write

,

which reduces to

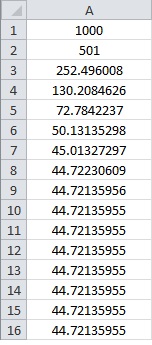
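Here is a minimal Python sketch of the iteration above, assuming a nonnegative input `a` and a positive starting guess `x0`. The function name `newton_sqrt`, the relative tolerance `tol`, and the iteration cap `max_iter` are hypothetical choices for illustration, not how any particular calculator's firmware is written.

```python
def newton_sqrt(a: float, x0: float, tol: float = 1e-12, max_iter: int = 100) -> float:
    """Approximate sqrt(a) with the iteration x_{k+1} = (x_k + a/x_k) / 2."""
    if a < 0:
        raise ValueError("a must be nonnegative")
    if a == 0:
        return 0.0
    if x0 <= 0:
        raise ValueError("initial guess must be positive")
    x = x0
    for _ in range(max_iter):
        # One Newton step for f(x) = x^2 - a.
        x_next = 0.5 * (x + a / x)
        # Hypothetical stopping rule: successive guesses agree to a relative tolerance.
        if abs(x_next - x) <= tol * x_next:
            return x_next
        x = x_next
    return x
```

For example, `newton_sqrt(2000, 1000)` returns a value that agrees with `math.sqrt(2000)` to within floating-point rounding, even though 1000 is a poor starting guess.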

This algorithm can be programmed in C++, Python, etc. For pedagogical purposes, however, I’ve found that a spreadsheet like Microsoft Excel is a good way to sell this to students. In the spreadsheet below, I use Excel to find $\sqrt{2000}$. In cell A1, I entered $1000$ as a first guess for $\sqrt{2000}$. Notice that this is a really lousy first guess! Then, in cell A2, I typed the formula

=1/2*(A1+2000/A1)

So Excel computes

$$\frac{1}{2}\left(1000 + \frac{2000}{1000}\right) = 501.$$

Then I filled down that formula into cells A3 through A16.
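To reproduce the fill-down outside Excel, the sketch below builds the same kind of column in Python. The function name `spreadsheet_column` is hypothetical, and the example run assumes a first guess of 1000 for $\sqrt{2000}$ (a deliberately poor starting point) with 16 rows standing in for cells A1 through A16.

```python
def spreadsheet_column(a: float, first_guess: float, rows: int) -> list[float]:
    """Mimic cells A1..A{rows}: A1 holds the guess, each later cell is 1/2*(prev + a/prev)."""
    column = [first_guess]
    for _ in range(rows - 1):
        prev = column[-1]
        # Same formula as =1/2*(A1+2000/A1), applied to the cell above.
        column.append(0.5 * (prev + a / prev))
    return column


if __name__ == "__main__":
    for row, value in enumerate(spreadsheet_column(2000, 1000, 16), start=1):
        print(f"A{row}: {value:.10f}")
```

Each list entry plays the role of one cell, so `column[k - 1]` corresponds to cell A$k$.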

Notice that this algorithm quickly converges to $\sqrt{2000} \approx 44.72135955$, even though the initial guess was terrible. After 7 steps, the answer is correct to only 2 significant digits ($\approx 45.01$). After 8 steps, the answer is correct to 4 significant digits ($\approx 44.7223$). On the 9th step, the answer is correct to 9 significant digits ($\approx 44.72135956$).
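The digit counts above can be checked with high-precision arithmetic. The sketch below is an illustration rather than anything from the original spreadsheet: it uses Python's `decimal` module with 60 digits of precision and treats $-\log_{10}$ of the relative error as a rough count of correct significant digits. The function name `newton_errors` and the first guess of 1000 are assumptions.

```python
from decimal import Decimal, getcontext

# Far more digits than we need, so rounding error does not hide the true error.
getcontext().prec = 60


def newton_errors(a: int, first_guess: int, steps: int) -> list[tuple[int, Decimal, Decimal]]:
    """Return (k, x_k, relative error of x_k) for k = 1..steps, where x_1 is the first guess."""
    a_dec = Decimal(a)
    exact = a_dec.sqrt()
    x = Decimal(first_guess)
    rows = []
    for k in range(1, steps + 1):
        rel_err = abs(x - exact) / exact
        rows.append((k, x, rel_err))
        x = (x + a_dec / x) / 2  # the same update as the spreadsheet formula
    return rows


if __name__ == "__main__":
    for k, x, rel_err in newton_errors(2000, 1000, 11):
        # -log10(relative error) is a rough count of correct significant digits.
        digits = -rel_err.log10() if rel_err else Decimal("Infinity")
        print(f"x_{k} = {x:.15f}   about {digits:.1f} correct digits")
```

Running it shows the estimated digit count roughly doubling from one row to the next once the guesses get close to $\sqrt{2000}$.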

Indeed, there’s a theorem stating that, once this algorithm gets close to the root, the number of correct digits roughly doubles with each successive step (this is called quadratic convergence). That’s a lot better than the methods shown at the start of this series of posts, which produced only one extra digit with each step.
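For this particular iteration, the doubling can be seen directly. Writing $a$ for the number whose square root we want and $x_k$ for the $k$th guess, a short computation (added here for illustration, using $(\sqrt{a})^2 = a$) gives

$$x_{k+1} - \sqrt{a} = \frac{x_k^2 + a}{2x_k} - \sqrt{a} = \frac{x_k^2 - 2\sqrt{a}\,x_k + a}{2x_k} = \frac{(x_k - \sqrt{a})^2}{2x_k}.$$

So each new error is the square of the previous error divided by $2x_k$, which is close to $2\sqrt{a}$ once $x_k$ is near the root. It also shows that, starting from any positive guess, every later guess is at least $\sqrt{a}$.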

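This algorithm works for finding $n$th roots as well as square roots. Since $\sqrt[n]{a}$ is a root of $f(x) = x^n - a$, Newton’s method reduces to

$$x_{k+1} = x_k - \frac{x_k^n - a}{n x_k^{n-1}} = \frac{1}{n}\left((n-1)x_k + \frac{a}{x_k^{n-1}}\right),$$

which reduces to the above sequence if $n = 2$.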
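The general iteration can be sketched the same way. This Python version assumes $a > 0$, a positive integer $n$, and a positive starting guess; the name `newton_nth_root` and its stopping rule are hypothetical choices for illustration.

```python
def newton_nth_root(a: float, n: int, x0: float, tol: float = 1e-12, max_iter: int = 200) -> float:
    """Approximate the positive nth root of a via x_{k+1} = ((n-1)*x_k + a/x_k**(n-1)) / n."""
    if a <= 0 or n < 1 or x0 <= 0:
        raise ValueError("need a > 0, n >= 1, and a positive initial guess")
    x = x0
    for _ in range(max_iter):
        # One Newton step for f(x) = x^n - a.
        x_next = ((n - 1) * x + a / x ** (n - 1)) / n
        # Stop once successive guesses agree to a relative tolerance.
        if abs(x_next - x) <= tol * x_next:
            return x_next
        x = x_next
    return x
```

With `n = 2` this is the square-root iteration again. Starting at or above the true root (for example, 1000 as a guess for $\sqrt[3]{2000}$) keeps every later guess above the root, so the guesses decrease steadily; a guess far below the root can produce a huge first jump, and for large `n` a tiny `x0` can even make `x ** (n - 1)` underflow to zero.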

See also this Wikipedia page for further historical information as well as discussion about how the above recursive sequence can be obtained without calculus.
