This post is inspired by one of the questions that I pose to our future high school math teachers during our Friday question-and-answer sessions. In these sessions, I play the role of a middle- or high-school student who’s asking a tricky question to his math teacher. Here’s the question:

A student asks, “My father bought a house for $200,000 at 12% interest. He told me that by the time he fi nishes paying for the house, it will have cost him more than $500,000. How is that possible? 12% of $200,000 is only $24,000.”

Without fail, these future teachers don’t have a good response to this question. Indeed, my experience is that most young adults (including college students) have never used an amortization table, which is the subject of today’s post.

In the past few posts, we have considered the solution of the following recurrence relation, which is often used to model the payment of a mortgage or of credit-card debt:

With this difference equation, the rate at which the principal is reduced can be simply computed using Microsoft Excel. This tool is called an amortization schedule or an amortization table; see E-How for the instructions of how to build one. Here’s a sample Excel spreadsheet that I’ll be illustrating below: Amortization schedule. My personal experience is that many math majors have never seen such a spreadsheet, even though they are familiar with compound interest problems and certainly have the mathematical tools to understand this spreadsheet.

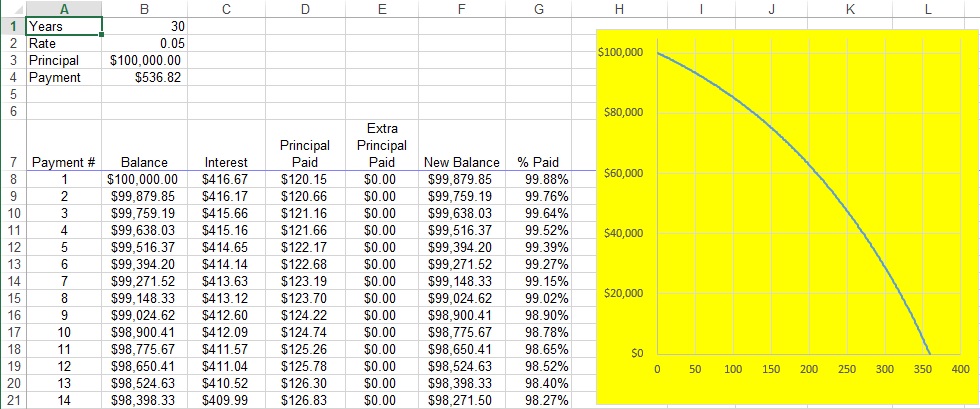

Here’s a screen capture from the spreadsheet:

The terms of the loan are typed into Cells B1 (length of loan, in years), B2 (annual percentage rate), and B3 (initial principal). Cell B4 is computed from this information using the Microsoft Excel command :

This is the amount that must be paid every month in order to pay off the loan in the prescribed number of years. Of course, there is a formula for this:

I won’t go into the derivation of this formula here, as it’s a bit complicated. Notice that this formula does not include escrow, points, closing costs, etc. This is strictly the amount of money that’s needed to pay down the principal.

The table, beginning in Row 8 of the above picture, shows how quickly the principal will be paid off. In row 8, the interest that’s paid for that month is computed by

Therefore, the amount of the monthly payment that actually goes toward paying down the principal will be

Column E provides an opportunity to pay something extra each month; more on this later. So, after taking into account the payments in columns D and E, the amount remaining on the loan is recorded in Cell F8:

This amount is then copied into Cell B9, and then the pattern can be filled down.

The yellow graph shows how quickly the balance of the loan is paid off over the length of the loan. A picture is worth a thousand words: in the initial years of the loan, most of the payments are gobbled up by the interest, and so the principal is paid off slowly. Only in the latter years of the loan is the principal paid off quickly.

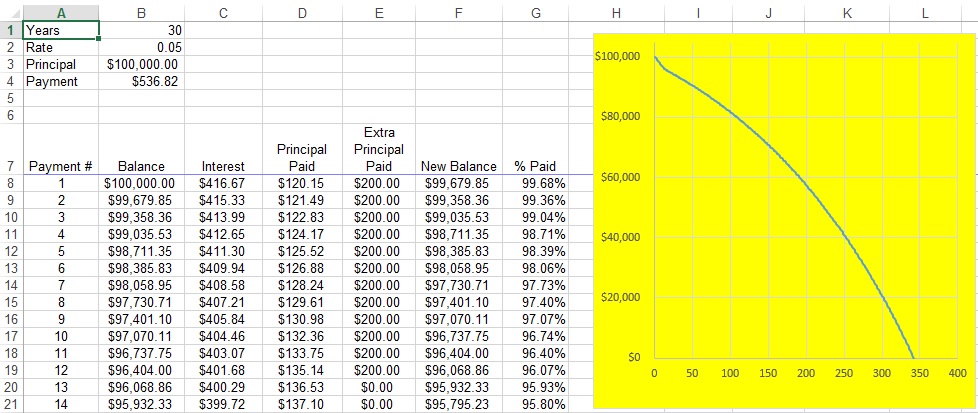

So, it stands to reason that any extra payments in the initial months and years of the loan can do wonders for paying off the loan quickly. For example, here’s a screenshot of what happens if an extra $200/month is paid only in the first 12 months of the loan:

A definite bend in the graph is evident in the initial 12 months until the normal payment is resumed in month 13. As a result of those extra payments, the curve now intersects the horizontal axis around 340. In other words, 20 fewer months are required to pay off the loan. Stated another way, the extra payments in the first year cost an extra

A definite bend in the graph is evident in the initial 12 months until the normal payment is resumed in month 13. As a result of those extra payments, the curve now intersects the horizontal axis around 340. In other words, 20 fewer months are required to pay off the loan. Stated another way, the extra payments in the first year cost an extra . However, in the long run, those payments saved about

!