In recent posts, we’ve seen the curious phenomenon that the commutative and associative laws do not apply to a conditionally convergent series or infinite product: while

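$$1 - \frac{1}{2} + \frac{1}{3} - \frac{1}{4} + \frac{1}{5} - \frac{1}{6} + \dots = \ln 2,$$

a rearranged series can be something completely different:

$$1 + \frac{1}{3} - \frac{1}{2} + \frac{1}{5} + \frac{1}{7} - \frac{1}{4} + \dots = \frac{3}{2} \ln 2.$$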

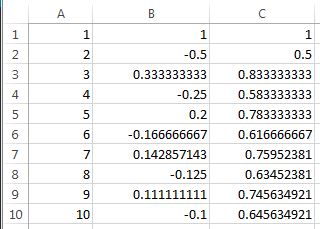

This very counterintuitive result can be confirmed with everyday technology, in particular Microsoft Excel. In the spreadsheet below, I typed:

- =IF(MOD(ROW(A1),3)=0,ROW(A1)*2/3,IF(MOD(ROW(A1),3)=1,4*(ROW(A1)-1)/3+1,4*(ROW(A1)-2)/3+3)) in cell A1

- =POWER(-1,A1-1)/A1 in cell B1

- =B1 in cell C1

- I copied cell A1 into cell A2

- =POWER(-1,A2-1)/A2 in cell B2

- =C1+B2 in cell C2

The unusual command for cell A1 was necessary to get the correct rearrangement of the series.
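For readers who would rather check the row-to-denominator rule outside of a spreadsheet, here is a minimal Python sketch of the same case analysis. The function name `rearranged_denominator` is my own label, not something from the spreadsheet, and the code assumes rows are numbered from 1 as in Excel.

```python
def rearranged_denominator(row: int) -> int:
    """Mirror the Excel formula in cell A1 for a 1-based row number."""
    remainder = row % 3
    if remainder == 0:
        # Rows 3, 6, 9, ... hold the even denominators 2, 4, 6, ...
        return 2 * row // 3
    if remainder == 1:
        # Rows 1, 4, 7, ... hold the odd denominators 1, 5, 9, ...
        return 4 * (row - 1) // 3 + 1
    # Rows 2, 5, 8, ... hold the odd denominators 3, 7, 11, ...
    return 4 * (row - 2) // 3 + 3


print([rearranged_denominator(n) for n in range(1, 10)])
# [1, 3, 2, 5, 7, 4, 9, 11, 6]
```

Each block of three rows therefore contributes two odd denominators (positive terms) followed by one even denominator (a negative term).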

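Then I used the FILL DOWN command to fill in the remaining rows. With these formulas, cell C9 shows the sum of all the entries in cells B1 through B9, so that it holds the ninth partial sum $1 + \frac{1}{3} - \frac{1}{2} + \frac{1}{5} + \frac{1}{7} - \frac{1}{4} + \frac{1}{9} + \frac{1}{11} - \frac{1}{6}$ of the rearranged series.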

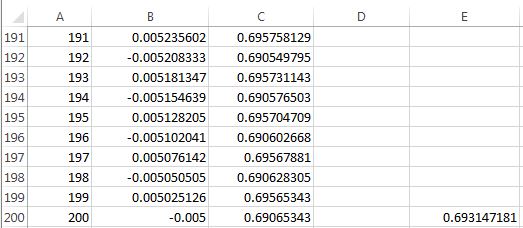

Filling down to additional rows demonstrates that the sum converges to and not to

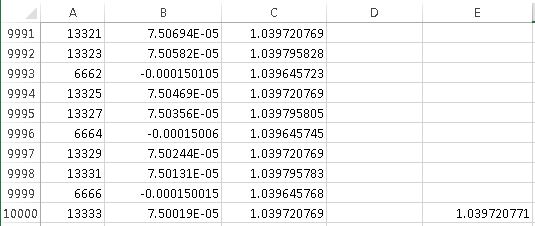

. Here’s the sum up to 10,000 terms… the entry in column E is the first few digits in the decimal expansion of

.

Clearly the partial sums are not approaching , and there’s good visual evidence to think that the answer is

instead. (Incidentally, the 10,000th partial sum is very close to the limiting value because

is one more than a multiple of 3.)
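The same experiment can be repeated without a spreadsheet. The following is a rough Python sketch, not a transcription of the Excel file: the helper names `rearranged_denominator` and `partial_sum` are assumptions of mine, with the first playing the role of column A and the second the role of column C.

```python
import math


def rearranged_denominator(row: int) -> int:
    """Denominator in the given 1-based row, following the column A rule."""
    remainder = row % 3
    if remainder == 0:
        return 2 * row // 3
    if remainder == 1:
        return 4 * (row - 1) // 3 + 1
    return 4 * (row - 2) // 3 + 3


def partial_sum(n_terms: int) -> float:
    """Running total of the first n_terms rearranged terms (like column C)."""
    total = 0.0
    for row in range(1, n_terms + 1):
        a = rearranged_denominator(row)
        # Same term as column B: positive for odd a, negative for even a.
        total += (-1) ** (a - 1) / a
    return total


target = 1.5 * math.log(2)
for n in (9, 100, 1000, 10000):
    s = partial_sum(n)
    print(f"n = {n:>6}: partial sum = {s:.10f}, gap to (3/2) ln 2 = {s - target:+.2e}")
print(f"ln 2 = {math.log(2):.10f}, (3/2) ln 2 = {target:.10f}")
```

Running this shows the partial sums settling near $\frac{3}{2} \ln 2$ rather than $\ln 2$, in line with the spreadsheet.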