Here’s an explanation for why $0^0$ is undefined that should be within the grasp of pre-algebra students:

Part 1.

- What is $0^3$? Of course, it’s $0$.
- What is $0^2$? Again, $0$.
- What is $0^1$? Again, $0$.
- What is $0^{1/2}$, or $\sqrt{0}$? Again, $0$.
- What is $0^{1/3}$, or $\sqrt[3]{0}$? In other words, what number, when cubed, is $0$? Again, $0$.
- What is $0^{1/10}$, or $\sqrt[10]{0}$? In other words, what number, when raised to the 10th power, is $0$? Again, $0$.

So as the exponent gets closer to $0$, the answer remains $0$. So, from this perspective, it looks like $0^0$ ought to be equal to $0$.

Part 2.

- What is $3^0$? Of course, it’s $1$.
- What is $2^0$? Again, $1$.
- What is $1^0$? Again, $1$.
- What is $\left(\frac{1}{2}\right)^0$? Again, $1$.
- What is $\left(\frac{1}{3}\right)^0$? Again, $1$.
- What is $\left(\frac{1}{10}\right)^0$? Again, $1$.

So as the base gets closer to $0$, the answer remains $1$. So, from this perspective, it looks like $0^0$ ought to be equal to $1$.

In conclusion: looking at it one way, $0^0$ should be defined to be $0$. From another perspective, $0^0$ should be defined to be $1$.

Of course, we can’t define a number to be two different things! So we’ll just say that $0^0$ is undefined, just like dividing by $0$ is undefined, rather than pretend that $0^0$ switches between two different values.
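The two patterns from Parts 1 and 2 can also be checked numerically. The snippet below is only a sketch: the variable names are made up for illustration, and it uses floating-point arithmetic, so it shows the pattern rather than proving anything. It deliberately never evaluates the expression with both base and exponent equal to zero.

```python
from fractions import Fraction

# The same six numbers are used as exponents (Part 1) and as bases (Part 2).
shrinking = [3, 2, 1, Fraction(1, 2), Fraction(1, 3), Fraction(1, 10)]

# Part 1: base fixed at 0, positive exponent shrinking toward 0.
zero_to_power = [0.0 ** float(e) for e in shrinking]

# Part 2: exponent fixed at 0, positive base shrinking toward 0.
base_to_zero = [float(b) ** 0 for b in shrinking]

for e, value in zip(shrinking, zero_to_power):
    print(f"0^({e}) = {value}")
for b, value in zip(shrinking, base_to_zero):
    print(f"({b})^0 = {value}")
```

Every entry in the first list is zero and every entry in the second list is one, which is exactly the conflict described above.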

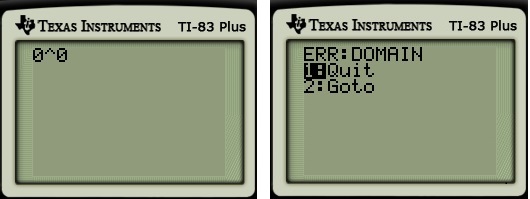

Here’s a more technical explanation about why $0^0$ is an indeterminate form, using calculus.

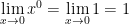

Part 1. As before,

$$\lim_{x \to 0^+} 0^x = \lim_{x \to 0^+} 0 = 0.$$

The first equality is true because, inside of the limit,  is permitted to get close to

is permitted to get close to  but cannot actually equal

but cannot actually equal  , and there’s no ambiguity about

, and there’s no ambiguity about  if

if  . (Naturally,

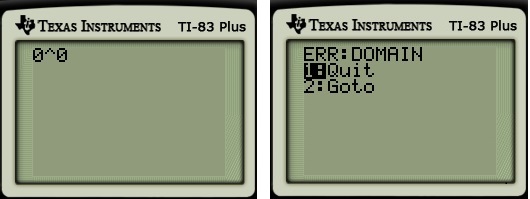

. (Naturally,  is undefined if

is undefined if  .)

.)

The second equality is true because the limit of a constant is the constant.

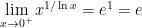

Part 2. As before,

$$\lim_{x \to 0} x^0 = \lim_{x \to 0} 1 = 1.$$

Once again, the first equality is true because, inside of the limit, $x$ is permitted to get close to $0$ but cannot actually equal $0$, and there’s no ambiguity about $x^0 = 1$ if $x \ne 0$.

As before, the answers from Parts 1 and 2 are different. But wait, there’s more…

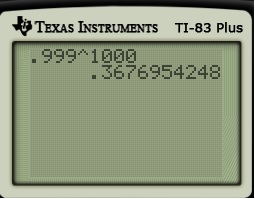

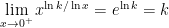

Part 3. Here’s another way that $0^0$ can be considered, just to give us a headache. Let’s evaluate

$$\lim_{x \to 0^+} x^{1/\ln x}.$$

Clearly, the base tends to $0$ as $x \to 0^+$. Also, $\ln x \to -\infty$ as $x \to 0^+$, so that $1/\ln x \to 0$ as $x \to 0^+$. In other words, this limit has the indeterminate form $0^0$.

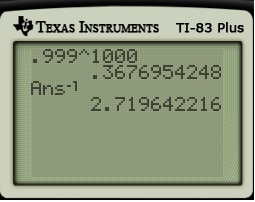

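To evaluate this limit, let’s take a logarithm under the limit:

$$\lim_{x \to 0^+} \ln\left(x^{1/\ln x}\right) = \lim_{x \to 0^+} \frac{1}{\ln x} \cdot \ln x = \lim_{x \to 0^+} 1 = 1.$$

Therefore, without the extra logarithm,

$$\lim_{x \to 0^+} x^{1/\ln x} = e^1 = e.$$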
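As a sanity check on Part 3, here is a small numerical sketch of $x^{1/\ln x}$ for shrinking positive $x$. The helper name `part3` and the sample points are my own choices, not anything canonical, and floating-point output only suggests the limit rather than establishing it.

```python
import math

def part3(x: float) -> float:
    """Evaluate x^(1/ln x) for 0 < x < 1 (hypothetical helper for illustration)."""
    return x ** (1.0 / math.log(x))

# Sample points marching toward 0 from the right.
samples = [10.0 ** -n for n in (1, 2, 5, 10, 50, 100)]

for x in samples:
    exponent = 1.0 / math.log(x)  # tends to 0 (from below) as x -> 0+
    print(f"x = {x:.0e}  exponent = {exponent:+.5f}  value = {part3(x):.12f}")
```

The base and the exponent both shrink toward zero, yet the printed values stay at $e$ up to rounding. That is no accident: since $x = e^{\ln x}$, the expression equals $e$ for every $x$ between $0$ and $1$, not just in the limit.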

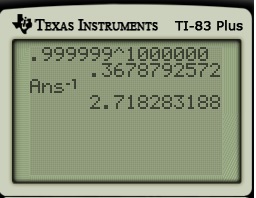

Part 4. It gets even better. Let $k$ be any positive real number. By the same logic as above,

$$\lim_{x \to 0^+} x^{\ln k/\ln x} = e^{\ln k} = k.$$

So, for any $k > 0$, we can find a function $f(x)$ of the indeterminate form $0^0$ so that $\displaystyle \lim_{x \to 0^+} f(x) = k$.

In other words, we could justify defining $0^0$ to be any nonnegative number. Clearly, it’s better instead to simply say that $0^0$ is undefined.
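The same kind of numerical sketch works for Part 4, using $f(x) = x^{\ln k/\ln x}$. The helper name `part4` and the particular values of $k$ are assumptions chosen for illustration; any positive $k$ should behave the same way, up to floating-point rounding.

```python
import math

def part4(x: float, k: float) -> float:
    """Evaluate x^(ln k / ln x) for 0 < x < 1 and k > 0 (hypothetical helper)."""
    return x ** (math.log(k) / math.log(x))

targets = (0.25, 1.0, 2.0, 10.0)               # a few positive values of k
samples = [10.0 ** -n for n in (1, 5, 20, 100)]  # x marching toward 0+

for k in targets:
    values = [part4(x, k) for x in samples]
    print(f"k = {k}: " + ", ".join(f"{v:.10f}" for v in values))
```

For each $k$, the base and the exponent both head to zero, and the values sit at $k$ throughout.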

P.S. I don’t know if it’s possible to have an indeterminate form of $0^0$ where the answer is either negative or infinite. I tend to doubt it, but I’m not sure.
