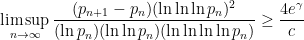

Suppose $p_n$ is the $n$th prime number, so that $p_{n+1} - p_n$ is the size of the $n$th gap between successive prime numbers. It turns out (Gamma, page 115) that there’s an incredible theorem for the lower bound of this number:

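$$\limsup_{n \to \infty} \frac{(p_{n+1} - p_n)(\ln \ln \ln p_n)^2}{(\ln p_n)(\ln \ln p_n)(\ln \ln \ln \ln p_n)} \ge \frac{4 e^{\gamma}}{c},$$

where $\gamma$ is the Euler-Mascheroni constant and $c$ is the solution of $c = 3 + e^{-c}$.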

Holy cow, what a formula. Let’s take a look at just a small part of it.

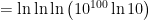

Let’s look at the amazing function $f(x) = \ln \ln \ln \ln x$, iterating the natural logarithm function four times. This function has a way of converting really large inputs into unimpressive outputs. For example, the canonical “big number” in popular culture is the googolplex, defined as $10^{10^{100}}$. Well, it takes some work just to rearrange $f\left(10^{10^{100}}\right)$ in a form suitable for plugging into a calculator:

$$\begin{aligned}
f\left(10^{10^{100}}\right) &= \ln \ln \ln \ln 10^{10^{100}} \\
&= \ln \ln \ln \left( 10^{100} \ln 10 \right) \\
&= \ln \ln \left[ \ln \left(10^{100} \right) + \ln \ln 10 \right] \\
&= \ln \ln \left[ 100 \ln 10 + \ln \ln 10 \right] \\
&= \ln \ln \left[ 100 \ln 10 \left( 1 + \frac{\ln \ln 10}{100 \ln 10} \right) \right] \\
&= \ln \left( \ln [ 100 \ln 10] + \ln \left( 1 + \frac{\ln \ln 10}{100 \ln 10} \right)\right) \\
&\approx 1.6943
\end{aligned}$$

after using a calculator.
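For readers who would rather check this with a few lines of code than a calculator, here is a minimal Python sketch of the same rearrangement. The function name `f_of_power_tower` is just an illustrative choice; the point is that the googolplex itself is never formed, only the expression $100 \ln 10 + \ln \ln 10$.

```python
import math


def f_of_power_tower(exponent: float) -> float:
    """Return ln ln ln ln(10**(10**exponent)) without forming the huge number."""
    ln10 = math.log(10.0)
    # ln ln(10**(10**exponent)) = ln(10**exponent * ln 10)
    #                           = exponent * ln 10 + ln ln 10
    inner = exponent * ln10 + math.log(ln10)
    # Two more logarithms complete the four-fold iteration.
    return math.log(math.log(inner))


# A googolplex is 10**(10**100), so the exponent is 100.
googolplex_value = f_of_power_tower(100)
print(round(googolplex_value, 4))
```

Trying `math.log(10.0 ** (10.0 ** 100))` directly would overflow a double-precision float, which is why the rearrangement is needed in the first place.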

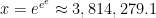

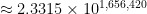

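This function grows extremely slowly. What value of $x$ gives an output of $0$? Well:

$$\begin{aligned}
\ln \ln \ln \ln x &= 0 \\
\ln \ln \ln x &= 1 \\
\ln \ln x &= e \\
\ln x &= e^e \\
x &= e^{e^e} \approx 3{,}814{,}279
\end{aligned}$$

What value of $x$ gives an output of $1$? Well:

$$\begin{aligned}
\ln \ln \ln \ln x &= 1 \\
\ln \ln \ln x &= e \\
\ln \ln x &= e^e \\
\ln x &= e^{e^e} \\
x &= e^{e^{e^e}} \approx 10^{1{,}656{,}520}
\end{aligned}$$

That’s a number with 1,656,521 digits! At the rapid rate of 5 digits per second, it would take over 92 hours (nearly 4 days) just to write out the answer by hand!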
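The digit count and the writing time can be checked without ever computing $e^{e^{e^e}}$ itself. The sketch below (variable names are my own) uses the fact that $\log_{10}\left(e^{t}\right) = t \log_{10} e$, so only $t = e^{e^e}$ needs to fit in a floating-point number.

```python
import math

# t = e^(e^e), the input that makes ln ln ln ln x equal to 0.
triple = math.exp(math.exp(math.e))

# log10 of e^t is t * log10(e); this avoids forming e^t, which would overflow.
log10_quadruple = triple * math.log10(math.e)

# A positive number with base-10 logarithm L has floor(L) + 1 digits
# in front of the decimal point.
digits = math.floor(log10_quadruple) + 1

# Writing time at 5 digits per second, converted to hours.
hours = digits / 5 / 3600

print(f"e^(e^e) is about {triple:,.1f}")
print(f"digits in e^(e^(e^e)): {digits:,}")
print(f"hours to write it out: {hours:.1f}")
```

In double-precision arithmetic this should report a digit count of roughly 1.66 million and a writing time a little over 92 hours.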

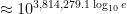

Finally, how large does the input have to be for the output to be 2? As we’ve already seen, it’s going to be larger than a googolplex, so let’s write the input as $10^{10^x}$ and solve for $x$:

$$\begin{aligned}
\ln \ln \ln \ln 10^{10^{x}} &= 2 \\
\ln \ln \ln \left( 10^{x} \ln 10 \right) &= 2 \\
\ln \ln \left[ \ln \left(10^{x} \right) + \ln \ln 10 \right] &= 2 \\
\ln \ln \left[ x\ln 10 + \ln \ln 10 \right] &= 2 \\
\ln \ln \left[ x\ln 10 \left( 1 + \frac{\ln \ln 10}{x\ln 10} \right) \right] &= 2 \\
\ln \left( \ln [ x\ln 10] + \ln \left( 1 + \frac{\ln \ln 10}{x \ln 10} \right)\right) &= 2
\end{aligned}$$

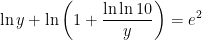

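Let’s simplify things slightly by letting $y = x \ln 10$ and exponentiating both sides:

$$\ln y + \ln \left( 1 + \frac{\ln \ln 10}{y} \right) = e^2$$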

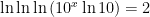

This is a transcendental equation in $y$; however, we can estimate that the solution will approximately solve $\ln y = e^2$ since the second term on the left-hand side is small compared to $\ln y$. This gives the approximation $y \approx e^{e^2} \approx 1618.18$. Using either Newton’s method or else graphing the left-hand side yields the more precise solution $y \approx 1617.34$.
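Here is a minimal sketch of the Newton’s method step in Python, applied to $g(y) = \ln y + \ln\left(1 + \frac{\ln \ln 10}{y}\right) - e^2$ with $y = x \ln 10$. The function names, tolerance, and iteration cap are my own choices, not anything from Gamma. As a cross-check, the two logarithms combine into $\ln(y + \ln \ln 10)$, so the root can also be written in closed form as $e^{e^2} - \ln \ln 10$.

```python
import math

A = math.log(math.log(10.0))  # ln ln 10, about 0.834
TARGET = math.exp(2.0)        # e^2, the right-hand side


def g(y: float) -> float:
    """Left-hand side minus right-hand side of the equation in y."""
    return math.log(y) + math.log1p(A / y) - TARGET


def g_prime(y: float) -> float:
    """Derivative of g: 1/y - A / (y * (y + A))."""
    return 1.0 / y - A / (y * (y + A))


def newton(y0: float, tol: float = 1e-10, max_iter: int = 50) -> float:
    """Plain Newton iteration started at y0."""
    y = y0
    for _ in range(max_iter):
        step = g(y) / g_prime(y)
        y -= step
        if abs(step) < tol:
            return y
    raise RuntimeError("Newton iteration did not converge")


y_start = math.exp(TARGET)         # first approximation, from ln y = e^2
y_root = newton(y_start)           # refined solution
y_closed = math.exp(TARGET) - A    # closed-form cross-check
x_root = y_root / math.log(10.0)   # undo the substitution y = x ln 10

print(f"start  y = {y_start:.4f}")
print(f"Newton y = {y_root:.4f}")
print(f"closed y = {y_closed:.4f}")
print(f"x        = {x_root:.4f}")
```

Starting from the first approximation, the iteration settles almost immediately because the correction term is so small compared to $\ln y$.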

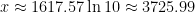

Therefore, $x = y / \ln 10 \approx 702.40$, so that

$$f\left(10^{10^{702.40}}\right) \approx 2.$$

One final note: despite what’s usually taught in high school, mathematicians typically use $\log$ to represent natural logarithms (as opposed to base-10 logarithms), so the above formula is more properly written as

$$\limsup_{n \to \infty} \frac{(p_{n+1} - p_n)(\log \log \log p_n)^2}{(\log p_n)(\log \log p_n)(\log \log \log \log p_n)} \ge \frac{4 e^{\gamma}}{c}.$$

And this sets up a standard joke, also printed in Gamma:

Q: What noise does a drowning analytic number theorist make?

A: Log… log… log… log…

When I was researching for my series of posts on conditional convergence, especially examples related to the constant $\gamma$, the reference Gamma: Exploring Euler’s Constant by Julian Havil kept popping up. Finally, I decided to splurge for the book, expecting a decent popular account of this number. After all, I’m a professional mathematician, and I took a graduate-level class in analytic number theory. In short, I don’t expect to learn a whole lot when reading a popular science book other than perhaps some new pedagogical insights.

Boy, was I wrong. As I turned every page, it seemed I hit a new factoid that I had not known before.

In this series, I’d like to compile some of my favorites — while giving the book a very high recommendation.