Numerical integration is a standard topic in first-semester calculus. From time to time, I have received questions from students on various aspects of this topic, including:

- Why is numerical integration necessary in the first place?

- Where do these formulas come from (especially Simpson’s Rule)?

- How can I do all of these formulas quickly?

- Is there a reason why the Midpoint Rule is better than the Trapezoid Rule?

- Is there a reason why both the Midpoint Rule and the Trapezoid Rule converge quadratically?

- Is there a reason why Simpson’s Rule converges like the fourth power of the number of subintervals?

In this series, I hope to answer these questions. While these are standard questions in an introductory college course in numerical analysis, and full and rigorous proofs can be found on Wikipedia and MathWorld, I will approach these questions from the point of view of a bright student who is currently enrolled in calculus and hasn’t yet taken real analysis or numerical analysis.

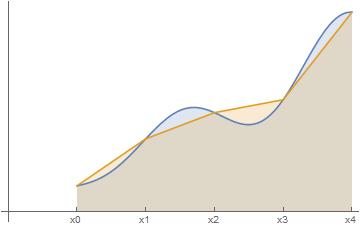

In the previous post in this series, I discussed three different ways of numerically approximating the definite integral $\int_a^b f(x) \, dx$, the area under a curve $f(x)$ between $x = a$ and $x = b$.

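In this series, we’ll choose equal-sized subintervals of the interval $[a,b]$. If $h = (b-a)/n$ is the width of each subinterval so that $x_k = x_0 + kh$ (with $x_0 = a$ and $x_n = b$), then the integral may be approximated as

$$\int_a^b f(x) \, dx \approx h \left[ f(x_0) + f(x_1) + \dots + f(x_{n-1}) \right] \equiv L_n$$

using left endpoints,

$$\int_a^b f(x) \, dx \approx h \left[ f(x_1) + f(x_2) + \dots + f(x_n) \right] \equiv R_n$$

using right endpoints, and

$$\int_a^b f(x) \, dx \approx h \left[ f(c_1) + f(c_2) + \dots + f(c_n) \right] \equiv M_n, \qquad c_k = \frac{x_{k-1} + x_k}{2},$$

using the midpoints of the subintervals.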
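For readers who like to experiment, here is a minimal Python sketch of the three rectangle approximations, assuming $n$ equal subintervals of width $h = (b-a)/n$ on $[a,b]$. The function names `left_sum`, `right_sum`, and `midpoint_sum` are my own labels for illustration and don't come from any library.

```python
def left_sum(f, a, b, n):
    """Rectangle approximation using the left endpoint of each subinterval."""
    h = (b - a) / n  # width of each subinterval
    return h * sum(f(a + k * h) for k in range(n))


def right_sum(f, a, b, n):
    """Rectangle approximation using the right endpoint of each subinterval."""
    h = (b - a) / n
    return h * sum(f(a + k * h) for k in range(1, n + 1))


def midpoint_sum(f, a, b, n):
    """Rectangle approximation using the midpoint of each subinterval."""
    h = (b - a) / n
    return h * sum(f(a + (k + 0.5) * h) for k in range(n))
```

For instance, calling `left_sum(lambda x: x**2, 0.0, 1.0, 100)` gives an underestimate of $1/3$ (since $x^2$ is increasing on $[0,1]$), while `right_sum` with the same arguments gives an overestimate.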

All three of these approximations were obtained by approximating the above shaded region by rectangles. However, perhaps it might be better to use some other shape besides rectangles. In the Trapezoidal Rule, we approximate the area by using (surprise!) trapezoids, as in the figure below.

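The first trapezoid has height $h$ and bases $f(x_0)$ and $f(x_1)$, and so the area of the first trapezoid is $\frac{1}{2} h \left[ f(x_0) + f(x_1) \right]$. The other areas are found similarly. Adding these together, we get the approximation

$$\int_a^b f(x) \, dx \approx \frac{h}{2} \left[ f(x_0) + f(x_1) \right] + \frac{h}{2} \left[ f(x_1) + f(x_2) \right] + \dots + \frac{h}{2} \left[ f(x_{n-1}) + f(x_n) \right]$$

$$= \frac{h}{2} \left[ f(x_0) + 2 f(x_1) + 2 f(x_2) + \dots + 2 f(x_{n-1}) + f(x_n) \right] \equiv T_n.$$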
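As a rough illustration, the trapezoid approximation can be sketched in a few lines of Python. This assumes $n$ equal subintervals of width $h = (b-a)/n$ on $[a,b]$, so that the two endpoint values are counted once and every interior value twice. The name `trapezoid_sum` is just a label I've chosen for this sketch.

```python
def trapezoid_sum(f, a, b, n):
    """Trapezoid approximation with n equal subintervals of [a, b]."""
    h = (b - a) / n  # width of each subinterval (the "height" of each trapezoid)
    # Interior points x_1, ..., x_{n-1} are shared by two trapezoids,
    # so each one is counted twice.
    interior = sum(f(a + k * h) for k in range(1, n))
    return (h / 2) * (f(a) + 2 * interior + f(b))
```

With $f(x) = x^2$ on $[0,1]$ and only $n = 2$ trapezoids, this returns $\frac{1}{4} \left[ 0 + 2 \cdot \frac{1}{4} + 1 \right] = 0.375$, a slight overestimate of the exact value $1/3$.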

Interestingly, $T_n$ is the average of the two endpoint approximations $L_n$ and $R_n$:

$$T_n = \frac{L_n + R_n}{2}.$$
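To see why, here is a short check. I'm writing $L_n$, $R_n$, and $T_n$ for the left-endpoint, right-endpoint, and trapezoid approximations with $n$ equal subintervals of width $h$ and grid points $x_k = a + kh$. Adding the two endpoint sums term by term, every interior value appears twice while $f(x_0)$ and $f(x_n)$ appear only once:

$$\begin{aligned}
L_n + R_n &= h \left[ f(x_0) + f(x_1) + \dots + f(x_{n-1}) \right] + h \left[ f(x_1) + f(x_2) + \dots + f(x_n) \right] \\
&= h \left[ f(x_0) + 2 f(x_1) + \dots + 2 f(x_{n-1}) + f(x_n) \right] \\
&= 2 \cdot \frac{h}{2} \left[ f(x_0) + 2 f(x_1) + \dots + 2 f(x_{n-1}) + f(x_n) \right] = 2 T_n.
\end{aligned}$$

Dividing by 2 gives the average.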

Of course, as a matter of computation, it’s a lot quicker to directly compute $T_n$ instead of computing $L_n$ and $R_n$ separately and then averaging.