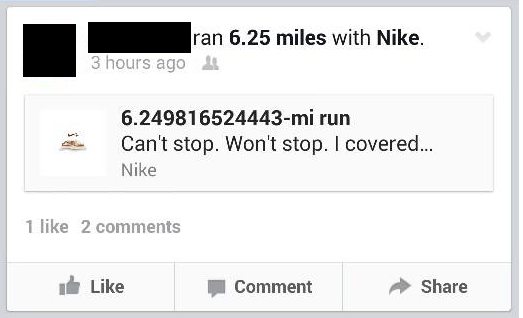

In yesterday’s post, I had a little fun with this claim that the Nike app could measure distance to the nearest trillionth of a mile. The more likely scenario is that the app just reported all of the digits of a double-precision floating point number, whether or not they were significant.
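Here is a hypothetical illustration of how that could happen. It is a sketch that assumes the app stores a distance in meters and converts it to miles using ordinary double-precision arithmetic; the variable names are made up. Python floats are IEEE 754 doubles on typical platforms, and printing one in full shows every digit the format carries, not just the digits the measurement supports.

```python
import sys

# Hypothetical: a run's length recorded in meters, converted to miles.
METERS_PER_MILE = 1609.344  # the international mile, exact by definition
distance_m = 42195.0        # official marathon length in meters
distance_mi = distance_m / METERS_PER_MILE

# repr() prints the shortest string that round-trips to the same double,
# typically 15 to 17 significant digits, with no regard for measurement accuracy.
print(repr(distance_mi))

# Formatting to a fixed number of decimals reports only what we choose to trust.
print(f"{distance_mi:.3f}")

# Number of decimal digits a double is guaranteed to preserve (15 for IEEE 754).
print(sys.float_info.dig)
```

The long `repr` output is simply the floating-point format talking; the three-decimal version is a choice about how many digits to believe.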

In real life, I’d expect that the first three decimal places are accurate, at most. According to Wikipedia, the official length of a marathon is 42.195 kilometers, but any particular marathon may be off by as much as 42 meters (0.1% of the total distance) to account for slight measurement errors when laying out a course of that length.
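The 0.1% allowance is straightforward arithmetic on the official distance; converting it to miles (1 mile = 1609.344 m) shows how large that slack is in the units the app reports:

$$
0.001 \times 42{,}195\ \text{m} = 42.195\ \text{m} \approx 42\ \text{m},
\qquad
\frac{42.195\ \text{m}}{1609.344\ \text{m/mi}} \approx 0.026\ \text{mi}.
$$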

A history of the Jones-Oerth Counter, the device used to measure the distance of road-running courses, can be found at the USA Track and Field website. And my friends who are serious runners swear that the Jones-Oerth Counter is much more accurate than GPS.

The same story often appears in students’ homework. For example:

If a living room is 17 feet long and 14 feet wide, how long is the diagonal distance across the room?

Using the Pythagorean theorem, students will find that the answer is $\sqrt{17^2 + 14^2} = \sqrt{485}$ feet. Then they’ll plug it into a calculator and write down the answer on their homework: 22.02271555 feet.
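The homework calculation can be reproduced in a few lines. This sketch assumes a calculator that displays 10 significant digits, which is what produces the long answer above; rounding to two decimal places instead gives a more honest figure.

```python
import math

# Side lengths of the room in feet, as given in the problem.
length_ft = 17
width_ft = 14

# Pythagorean theorem: the diagonal is the square root of 17^2 + 14^2 = 485.
diagonal_ft = math.sqrt(length_ft**2 + width_ft**2)

# What a 10-significant-digit calculator display would show.
print(f"{diagonal_ft:.10g}")   # 22.02271555

# The same value rounded to two decimal places.
print(f"{diagonal_ft:.2f}")    # 22.02
```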

This answer, of course, is ridiculous because a standard ruler cannot possibly measure a distance that precisely. The answer follows from the false premise that the numbers 17 and 14 are somehow exact, with absolutely no measurement error. My guess is that at most two decimal places are significant (i.e., the numbers 17 and 14 can be measured accurately to within one-hundredth of a foot, or about one-eighth of an inch).
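One way to check that guess is a simple worst-case interval: assume each side is only known to within ±0.01 ft (the assumption stated above) and compute the diagonal at both extremes. Because the diagonal grows with both sides, the smallest and largest inputs bound it. The helper name `diagonal` is just for illustration.

```python
import math

def diagonal(a: float, b: float) -> float:
    """Length of the diagonal of an a-by-b rectangle (Pythagorean theorem)."""
    return math.hypot(a, b)

# Assumption: each side is known only to within +/- 0.01 ft.
TOLERANCE_FT = 0.01
length_ft, width_ft = 17.0, 14.0

# Shrinking both sides gives the shortest possible diagonal,
# enlarging both gives the longest.
low = diagonal(length_ft - TOLERANCE_FT, width_ft - TOLERANCE_FT)
high = diagonal(length_ft + TOLERANCE_FT, width_ft + TOLERANCE_FT)

print(f"diagonal is between {low:.3f} and {high:.3f} ft")
```

The two bounds differ by less than 0.03 ft, so the answer is roughly 22.02 ft give or take about 0.014 ft, and the trailing digits of 22.02271555 carry no information.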

My experience is that not many students are comfortable with the concept of significant digits (or significant figures), even though this is a standard topic in introductory courses in chemistry and physics. An excellent write-up of the issues can be found here: http://www.angelfire.com/oh/cmulliss/
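The basic mechanical step behind significant figures, rounding a number to a chosen count of significant digits, is easy to sketch. The function `round_sig` below is a hypothetical helper written for this example, not part of any library, and it only rounds a single value; it does not propagate uncertainty the way full significance arithmetic does.

```python
import math

def round_sig(x: float, sig: int) -> float:
    """Round x to `sig` significant figures (illustrative helper)."""
    if x == 0:
        return 0.0
    # Power of ten of the leading digit: 1 for 22.02..., -3 for 0.0012...
    exponent = math.floor(math.log10(abs(x)))
    # Keep sig digits counting from the leading digit.
    return round(x, sig - 1 - exponent)

print(round_sig(22.02271555, 4))  # 22.02
print(round_sig(0.0012345, 2))    # 0.0012
```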

Other resources:

http://mathworld.wolfram.com/SignificantDigits.html

http://en.wikipedia.org/wiki/Significant_figures

http://en.wikipedia.org/wiki/Significance_arithmetic