The following problem appeared in Volume 53, Issue 4 (2022) of The College Mathematics Journal.

Define, for every non-negative integer $n$, the $n$th Catalan number by

$$C_n := \frac{1}{n+1}\binom{2n}{n}.$$
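To get a feel for these numbers, the definition can be evaluated directly. Here is a minimal Python sketch using the standard-library `math.comb`; the function name `catalan` is my own choice and does not come from the problem.

```python
from math import comb

def catalan(n: int) -> int:
    """n-th Catalan number via the closed form C_n = binom(2n, n) / (n + 1)."""
    # binom(2n, n) is always divisible by n + 1, so integer division is exact.
    return comb(2 * n, n) // (n + 1)

print([catalan(n) for n in range(8)])
# [1, 1, 2, 5, 14, 42, 132, 429]
```

Integer division is safe here precisely because every $C_n$ is an integer.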

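Consider the sequence of complex polynomials in $z$ defined by $z_{k+1} := z_k^2 + z$ for every non-negative integer $k$, where $z_0 := z$. It is clear that $z_k$ has degree $2^k$ and thus has the representation

$$z_k = \sum_{n=1}^{2^k} M_{n,k} z^n,$$

where each $M_{n,k}$ is a positive integer. Prove that $M_{n,k} = C_{n-1}$ for $1 \le n \le k+1$.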

This problem appeared in the same issue as the probability problem considered in the previous two posts. Looking back, I think that the confidence that I gained by solving that problem gave me the persistence to solve this problem as well.

My first thought when reading this problem was something like “This involves sums, polynomials, and binomial coefficients. And since the sequence is recursively defined, it’s probably going to involve a proof by mathematical induction. I can do this.”

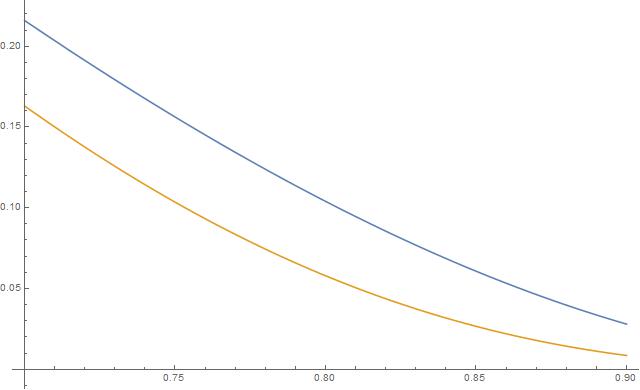

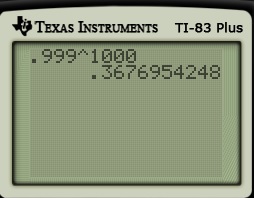

My second thought was to use Mathematica to develop my own intuition and to confirm that the claimed pattern actually worked for the first few values of $k$.

As claimed in the statement of the problem, each $z_k$ is a polynomial of degree $2^k$ with no constant term. Also, for each $k$, the term of degree $n$, for $1 \le n \le k+1$, has a coefficient that does not depend on $k$ and is equal to $C_{n-1}$. For example, for $k = 4$, the coefficient of $z^5$ (in orange above) is equal to

$$C_4 = \frac{1}{5}\binom{8}{4} = 14,$$

and the problem claims that the coefficient of $z^5$ will remain 14 for all $k \ge 5$.
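The Mathematica session itself isn't reproduced here; as a rough stand-in, the Python sketch below stores each $z_k$ as a list of coefficients indexed by the power of $z$, applies $z_{k+1} = z_k^2 + z$ by convolving the list with itself, and checks the degree, the missing constant term, and $M_{n,k} = C_{n-1}$ for $1 \le n \le k+1$ up to $k = 8$. The helper names `catalan` and `next_poly` are hypothetical.

```python
from math import comb

def catalan(n: int) -> int:
    # C_n = binom(2n, n) / (n + 1)
    return comb(2 * n, n) // (n + 1)

def next_poly(coeffs: list[int]) -> list[int]:
    """Given the coefficients of z_k (index = power of z), return those of z_k^2 + z."""
    out = [0] * (2 * len(coeffs) - 1)
    # Square the polynomial by convolving its coefficient list with itself.
    for i, a in enumerate(coeffs):
        if a == 0:
            continue
        for j, b in enumerate(coeffs):
            out[i + j] += a * b
    out[1] += 1  # the "+ z" term
    return out

coeffs = [0, 1]  # z_0 = z
for k in range(1, 9):
    coeffs = next_poly(coeffs)
    assert len(coeffs) - 1 == 2 ** k  # degree 2^k
    assert coeffs[0] == 0              # no constant term
    # M_{n,k} = C_{n-1} for 1 <= n <= k+1
    assert all(coeffs[n] == catalan(n - 1) for n in range(1, k + 2))
    if k == 4:
        print(coeffs[5])  # 14
```

Passing these checks for $k \le 8$ is evidence for the pattern, not a proof of it.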

Confident that the pattern actually worked, I only had to push through the proof by induction.

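We proceed by induction on $k$. The statement clearly holds for $k = 1$: $z_1 = z^2 + z$, so $M_{1,1} = 1 = C_0$ and $M_{2,1} = 1 = C_1$. (The case $k = 0$, where $z_0 = z$ and $M_{1,0} = 1 = C_0$, is just as immediate.)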

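Although not necessary, I'll add for good measure that

$$z_2 = (z^2 + z)^2 + z = z^4 + 2z^3 + z^2 + z$$

and

$$z_3 = (z^4 + 2z^3 + z^2 + z)^2 + z = z^8 + 4z^7 + 6z^6 + 6z^5 + 5z^4 + 2z^3 + z^2 + z.$$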

This next calculation illustrates what's coming later. In the previous calculation, the coefficient of $z^4$ is found by multiplying out

$$(z^4 + 2z^3 + z^2 + z)(z^4 + 2z^3 + z^2 + z).$$

This is accomplished by examining all pairs of terms, one from the left factor and one from the right factor, whose exponents add up to $4$. In this case, it's

$$2z^3 \cdot z + z^2 \cdot z^2 + z \cdot 2z^3 = 5z^4,$$

and indeed $5 = C_3$.
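As a second instance of the same pairing (a worked check, assuming the expansion of $z_3$ given above), the coefficient of $z^5$ in $z_4 = z_3^2 + z$ collects the exponent pairs $(1,4)$, $(2,3)$, $(3,2)$, $(4,1)$:

$$M_{5,4} = M_{1,3}M_{4,3} + M_{2,3}M_{3,3} + M_{3,3}M_{2,3} + M_{4,3}M_{1,3} = 1 \cdot 5 + 1 \cdot 2 + 2 \cdot 1 + 5 \cdot 1 = 14 = C_4.$$

Written with Catalan numbers, this sum is $C_0C_3 + C_1C_2 + C_2C_1 + C_3C_0$, which has the same shape as the sum in the inductive step.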

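For the inductive step, we assume that, for some $k \ge 1$,

$$M_{n,k} = C_{n-1}$$

for all $1 \le n \le k+1$, and we write

$$z_{k+1} = z_k^2 + z = \left( \sum_{n=1}^{2^k} M_{n,k} z^n \right)^2 + z.$$

Our goal is to show that $M_{n,k+1} = C_{n-1}$ for $1 \le n \le k+2$.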

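For $n = 1$, the coefficient $M_{1,k+1}$ of $z^1$ in $z_{k+1}$ is clearly 1, or $C_0$: every term of $z_k^2$ has degree at least 2, so the only contribution comes from the added $z$.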

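For $2 \le n \le k+2$, the coefficient $M_{n,k+1}$ of $z^n$ in $z_{k+1}$ can be found by expanding the above square (the added $z$ affects only the coefficient of $z^1$). Every product of the form

$$M_{j,k} z^j \cdot M_{n-j,k} z^{n-j}$$

will contribute to the term $z^n$. Since $n - 1 \le k + 1 \le 2^k$ (because $k \ge 1$), every exponent $j = 1, 2, \dots, n-1$ actually appears in $z_k$, so the values of $j$ that contribute to this term are $j = 1, 2, \dots, n-1$. (Ordinarily, the $j = 0$ and $j = n$ terms would also contribute; however, there is no $z^0$ term in the expression being squared.) Moreover, both $j$ and $n - j$ lie between $1$ and $n - 1 \le k + 1$, so the induction hypothesis applies to both factors. Therefore, after using the induction hypothesis and reindexing with $i = j - 1$, we find

$$M_{n,k+1} = \sum_{j=1}^{n-1} M_{j,k} M_{n-j,k} = \sum_{j=1}^{n-1} C_{j-1} C_{n-j-1} = \sum_{i=0}^{n-2} C_i C_{n-2-i} = C_{n-1}.$$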

The last step used the recursive relationship $C_{m+1} = \sum_{i=0}^{m} C_i C_{m-i}$ for the Catalan numbers (here with $m = n - 2$), which I vaguely recalled but absolutely had to look up to complete the proof.
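Since that recurrence had to be looked up, a quick numerical sanity check is reassuring. The Python sketch below (function names are my own, purely illustrative) builds $C_0, \dots, C_{20}$ from $C_0 = 1$ and $C_{m+1} = \sum_{i=0}^{m} C_i C_{m-i}$, then compares them with the closed-form definition. A finite check like this is not a proof; it only guards against misremembering the indices.

```python
from math import comb

def catalan_closed(n: int) -> int:
    # C_n = binom(2n, n) / (n + 1)
    return comb(2 * n, n) // (n + 1)

def catalan_recursive(limit: int) -> list[int]:
    """C_0, ..., C_limit built from C_0 = 1 and C_{m+1} = sum_{i=0}^{m} C_i C_{m-i}."""
    c = [1]
    for m in range(limit):
        c.append(sum(c[i] * c[m - i] for i in range(m + 1)))
    return c

# The recurrence and the closed form agree for n = 0, ..., 20.
assert catalan_recursive(20) == [catalan_closed(n) for n in range(21)]
```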