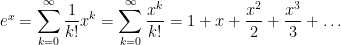

I’m in the middle of a series of posts describing how I remind students about Taylor series. In the previous posts, I described how I lead students to the definition of the Maclaurin series

$$f(x) = \sum_{k=0}^{\infty} \frac{f^{(k)}(0)}{k!} x^k,$$

which converges to $f(x)$ within some radius of convergence for all functions that commonly appear in the secondary mathematics curriculum.

Step 4. Let’s now get some practice with Maclaurin series. Let’s start with $f(x) = e^x$.

What’s $f(0)$? That’s easy: $f(0) = e^0 = 1$.

Next, to find $f'(0)$, we first find $f'(x)$. What is it? Well, that’s also easy: $f'(x) = e^x$. So $f'(0)$ is also equal to $1$.

How about $f''(0)$? Yep, it’s also $1$. In fact, it’s clear that $f^{(n)}(0) = 1$ for all $n$, though we’ll skip the formal proof by induction.

Plugging into the above formula, we find that

$$e^x = \sum_{k=0}^{\infty} \frac{x^k}{k!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \dots$$

It turns out that the radius of convergence for this power series is $\infty$. In other words, the series on the right converges for all values of $x$. Though we’ll skip this for review purposes, this can be formally checked by using the Ratio Test.
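For readers who want to see that Ratio Test check, here is a short sketch. The notation $a_k = x^k/k!$ for the $k$th term is introduced here only for illustration. For any fixed $x \ne 0$,

$$\lim_{k \to \infty} \left| \frac{a_{k+1}}{a_k} \right| = \lim_{k \to \infty} \left| \frac{x^{k+1}}{(k+1)!} \cdot \frac{k!}{x^k} \right| = \lim_{k \to \infty} \frac{|x|}{k+1} = 0 < 1,$$

so the series converges for every nonzero $x$. At $x = 0$ the series is just $1$, so it converges there too.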

At this point, students generally feel confident about the mechanics of finding a Taylor series expansion, and that’s a good thing. However, in my experience, their command of Taylor series is still somewhat artificial. They can go through the motions of taking derivatives and finding the Taylor series, but this complicated symbol in $\sum$ notation still doesn’t have much meaning.

So I shift gears somewhat to discuss the rate of convergence. My hope is to deepen students’ knowledge by getting them to believe that $e^x$ really can be approximated to high precision with only a few terms. Perhaps not surprisingly, the series converges more quickly for small values of $x$ than for big values of $x$.

Pedagogically, I like to use a spreadsheet like Microsoft Excel to demonstrate the rate of convergence. A calculator could be used, but with Excel students can see at a glance how quickly (or slowly) the terms get smaller. I usually construct the spreadsheet in class on the fly (the fill down feature is really helpful for doing this quickly), with the end product looking something like this:

In this way, students can immediately see that the Taylor series is accurate to four significant digits by going up to the  term and that about ten or eleven terms are needed to get a figure that is as accurate as the precision of the computer will allow. In other words, for all practical purposes, an infinite number of terms are not necessary.
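For readers without a spreadsheet handy, here is a minimal Python sketch of the same kind of table: one row per term, with the running partial sum alongside. The function name `taylor_exp_rows` and the choice $x = 1$ are assumptions made for illustration and are not taken from the spreadsheet itself.

```python
import math


def taylor_exp_rows(x, n_terms):
    """Return (k, term, partial_sum) rows for the Maclaurin series of e^x."""
    rows = []
    term = 1.0      # the k = 0 term: x^0 / 0! = 1
    partial = 0.0
    for k in range(n_terms):
        partial += term
        rows.append((k, term, partial))
        # Next term: x^(k+1)/(k+1)! equals the current term times x/(k+1).
        term *= x / (k + 1)
    return rows


if __name__ == "__main__":
    x = 1.0  # hypothetical value, chosen only for this sketch
    print(" k  term              partial sum        error vs math.exp")
    for k, term, partial in taylor_exp_rows(x, 15):
        error = abs(partial - math.exp(x))
        print(f"{k:2d}  {term:.10e}  {partial:.15f}  {error:.3e}")
```

Building each term from the previous one mirrors what the fill down feature does in a spreadsheet, and it avoids computing large powers and factorials separately.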

In short, this is how a calculator computes $e^x$: adding up the first few terms of a Taylor series. Back in high school, when students hit the $e^x$ button on their calculators, they trusted the result, but the mechanics of how the calculator got the result were shrouded in mystery. No longer.

Then I shift gears by trying a larger value of $x$:

I ask my students the obvious question: What went wrong? They’re usually able to volunteer a few ideas:

- The convergence is slower for larger values of $x$.

- The series will converge, but more terms are needed (and I’ll later use the fill down feature to get enough terms so that it does converge as accurately as double precision will allow).

- The individual terms get bigger until $k$ is about $x$ and then start getting smaller. I’ll ask my students why this happens, and I’ll eventually get an explanation like this: each term is the previous one multiplied by $x/k$, so $\frac{x^k}{k!} > \frac{x^{k-1}}{(k-1)!}$ while $k < x$, but $\frac{x^k}{k!} < \frac{x^{k-1}}{(k-1)!}$ once $k > x$.

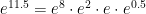
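The rise and fall of the individual terms can also be checked with a few lines of Python. This is only a sketch: the helper names `exp_terms` and `index_of_largest_term` are made up for this example, and $x = 10.5$ is a hypothetical "larger value," not the one used in the spreadsheet.

```python
import math


def exp_terms(x, n_terms):
    """Return the first n_terms terms x^k / k! of the Maclaurin series for e^x."""
    terms = []
    term = 1.0
    for k in range(n_terms):
        terms.append(term)
        term *= x / (k + 1)  # multiply by x/(k+1) to get the next term
    return terms


def index_of_largest_term(x, n_terms):
    """Return the index k of the largest term among the first n_terms terms."""
    terms = exp_terms(x, n_terms)
    return max(range(n_terms), key=lambda k: terms[k])


if __name__ == "__main__":
    x = 10.5  # hypothetical larger value, chosen only for this sketch
    terms = exp_terms(x, 60)
    print("largest term at k =", index_of_largest_term(x, 60))
    print("partial sum:", sum(terms))
    print("math.exp:   ", math.exp(x))
```

Since each term is the previous one times $x/k$, the terms keep growing as long as $k < x$ and shrink afterward, which is why so many more terms are needed before the partial sums settle down.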

At this point, I’ll mention that calculators use some tricks to speed up convergence. For example, the calculator can simply store a few values of $e^x$ in memory, like  ,  ,  ,  , and  . I then ask my class how these could be used to find  . After some thought, they will volunteer that

.

The first three values don’t need to be computed (they’ve already been stored in memory), while the last value can be computed via Taylor series. Also, since  , the series for  will converge pretty quickly. (Some students may volunteer that the above product is logically equivalent to turning  into binary.)
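Here is a minimal Python sketch of this stored-values trick. Everything specific in it is an assumption for illustration: the table holds $e^1, e^2, e^4, e^8, e^{16}$, it is filled using `math.exp` as a stand-in for constants that would be stored in memory, and the names `STORED`, `taylor_exp`, and `exp_with_table` are hypothetical. It is not a description of how any particular calculator actually works.

```python
import math

# Hypothetical lookup table: e^(2^j) for j = 0..4, i.e. e^1, e^2, e^4, e^8, e^16.
# math.exp stands in for constants that a calculator would have stored in memory.
STORED = {2 ** j: math.exp(2 ** j) for j in range(5)}


def taylor_exp(x, n_terms=20):
    """Sum the first n_terms terms of the Maclaurin series for e^x."""
    total, term = 0.0, 1.0
    for k in range(n_terms):
        total += term
        term *= x / (k + 1)
    return total


def exp_with_table(x):
    """Approximate e^x for 0 <= x < 32 using stored values plus a short series."""
    if not 0 <= x < 32:
        raise ValueError("this sketch only handles 0 <= x < 32")
    whole = int(x)       # integer part, handled by the stored values
    frac = x - whole     # fractional part in [0, 1), handled by the Taylor series
    result = taylor_exp(frac)
    for power in sorted(STORED, reverse=True):
        if whole & power:            # this power of two appears in the binary form
            result *= STORED[power]
    return result


if __name__ == "__main__":
    x = 21.25  # hypothetical input: 16 + 4 + 1 + 0.25
    print(exp_with_table(x), math.exp(x))
```

The bitwise test `whole & power` is exactly the "turn it into binary" observation: each 1 bit in the integer part picks out one stored value, and only the small fractional part is left for the series.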

At this point, after doing these explicit numerical examples, I’ll show graphs of $e^x$ and graphs of the Taylor polynomials of $e^x$, observing that the polynomials get closer and closer to the graph of $e^x$ as more terms are added. (For example, see the graphs on the Wikipedia page for Taylor series, though I prefer to use Mathematica for in-class purposes.) In my opinion, the convergence of the graphs becomes meaningful to students only after doing some numerical examples, as done above.
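The same "closer and closer" behavior can be put into numbers without drawing anything. The sketch below measures the largest gap between $e^x$ and its Taylor polynomial of a given degree over a sampled interval. The interval $[-2, 2]$, the sample count, and the function names are assumptions chosen for illustration.

```python
import math


def taylor_poly_exp(x, degree):
    """Evaluate the degree-n Taylor polynomial of e^x (centered at 0) at x."""
    total, term = 0.0, 1.0
    for k in range(degree + 1):
        total += term
        term *= x / (k + 1)
    return total


def max_error_on_interval(degree, lo=-2.0, hi=2.0, samples=401):
    """Largest sampled gap between e^x and its degree-n Taylor polynomial."""
    worst = 0.0
    for i in range(samples):
        x = lo + (hi - lo) * i / (samples - 1)
        worst = max(worst, abs(taylor_poly_exp(x, degree) - math.exp(x)))
    return worst


if __name__ == "__main__":
    for degree in range(1, 12):
        print(f"degree {degree:2d}: max error {max_error_on_interval(degree):.3e}")
```

On a fixed interval, the largest gap shrinks each time a term is added, which is the numerical counterpart of the polynomials hugging the graph of $e^x$ more tightly.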

At this point, I hope my students are familiar with the definition of Taylor (Maclaurin) series, can apply the definition to $e^x$, and have some intuition that the nasty Taylor series expression practically means this: add a bunch of terms together until you’re satisfied with the convergence.

In the next post, we’ll consider another Taylor series which ought to be (but usually isn’t) really familiar to students: an infinite geometric series.

P.S. Here’s the Excel spreadsheet that I used to make the above figures: Taylor.


using the quadratic formula; more on that later.) To apply the method of successive approximation, we will rewrite this so that

appears on the left side and some function of

appears on the right side. I will choose

, or

.

.

into the right-hand side to get a new guess,

.

into the right-hand side to get a new guess,

.

. Then

into a calculator, then entering

, and then repeatedly hitting the

button.

, which we now find via the quadratic formula:

.

.

. Unfortunately, if we start with a guess near this root, like

, the sequence unexpectedly diverges from

but eventually converges to the positive root

: