Source: https://xkcd.com/2545/

The New York Times consistently provides the best coverage of mathematics and science by a traditional news outlet. Today, I’d like to feature their article “The Odds, Updated Continually,” which gives a nice synopsis of the growth of Bayesian statistics in recent years and of how the Bayesian approach differs from the frequentist interpretation of statistics. For example:

Statistics may not sound like the most heroic of pursuits. But if not for statisticians, a Long Island fisherman might have died in the Atlantic Ocean after falling off his boat early one morning last summer.

The man owes his life to a once obscure field known as Bayesian statistics — a set of mathematical rules for using new data to continuously update beliefs or existing knowledge…

The essence of the frequentist technique is to apply probability to data. If you suspect your friend has a weighted coin, for example, and you observe that it came up heads nine times out of 10, a frequentist would calculate the probability of getting such a result with an unweighted coin. The answer (about 1 percent) is not a direct measure of the probability that the coin is weighted; it’s a measure of how improbable the nine-in-10 result is — a piece of information that can be useful in investigating your suspicion.
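The "about 1 percent" figure in the coin example can be checked directly from the binomial distribution. The sketch below is my own illustration, not part of the article; the function name `binomial_pmf` is hypothetical, and it reports both the probability of exactly nine heads and of nine or more heads, since the article does not say which one it has in mind.

```python
from math import comb


def binomial_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k heads in n independent tosses with P(heads) = p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


n_tosses = 10
heads = 9
fair_p = 0.5  # an unweighted coin

# Probability of exactly nine heads with a fair coin.
p_exactly_nine = binomial_pmf(heads, n_tosses, fair_p)

# Probability of a result at least this extreme (nine or ten heads).
p_nine_or_more = sum(
    binomial_pmf(k, n_tosses, fair_p) for k in range(heads, n_tosses + 1)
)

print(f"P(exactly 9 heads | fair coin) = {p_exactly_nine:.4f}")
print(f"P(9 or more heads | fair coin) = {p_nine_or_more:.4f}")
```

Either way the result is close to 1 percent, and either way it is a statement about the data given a fair coin, not about the coin itself.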

By contrast, Bayesian calculations go straight for the probability of the hypothesis, factoring in not just the data from the coin-toss experiment but any other relevant information — including whether you’ve previously seen your friend use a weighted coin.
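To make the contrast concrete, here is a minimal sketch of the Bayesian version of the coin example. Everything numeric in it beyond the nine-in-10 result is an assumption of mine, not something the article states: the only alternative considered is a coin weighted to land heads 90 percent of the time, and the prior probability that your friend is using such a coin is a parameter you have to supply. The function name `posterior_weighted` is hypothetical.

```python
from math import comb


def likelihood(heads: int, tosses: int, p_heads: float) -> float:
    """Binomial probability of the observed data for a coin with P(heads) = p_heads."""
    return comb(tosses, heads) * p_heads**heads * (1 - p_heads) ** (tosses - heads)


def posterior_weighted(prior_weighted: float, heads: int = 9, tosses: int = 10,
                       weighted_p: float = 0.9, fair_p: float = 0.5) -> float:
    """Bayes' rule: P(weighted | data) under a two-hypothesis model.

    weighted_p is an assumed bias for the weighted coin (illustrative only).
    """
    evidence_weighted = prior_weighted * likelihood(heads, tosses, weighted_p)
    evidence_fair = (1 - prior_weighted) * likelihood(heads, tosses, fair_p)
    return evidence_weighted / (evidence_weighted + evidence_fair)


# The same data lead to different conclusions under different prior beliefs.
for prior in (0.01, 0.1, 0.5):
    print(f"prior = {prior:.2f} -> posterior = {posterior_weighted(prior):.3f}")
```

The point of the loop is that the answer depends on what you already believed about your friend, which is exactly the "other relevant information" the article refers to.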

Scientists who have learned Bayesian statistics often marvel that it propels them through a different kind of scientific reasoning than they’d experienced using classical methods.

“Statistics sounds like this dry, technical subject, but it draws on deep philosophical debates about the nature of reality,” said the Princeton University astrophysicist Edwin Turner, who has witnessed a widespread conversion to Bayesian thinking in his field over the last 15 years…

The Coast Guard has been using Bayesian analysis since the 1970s. The approach lends itself well to problems like searches, which involve a single incident and many different kinds of relevant data, said Lawrence Stone, a statistician for Metron, a scientific consulting firm in Reston, Va., that works with the Coast Guard.

At first, all the Coast Guard knew about the fisherman was that he fell off his boat sometime from 9 p.m. on July 24 to 6 the next morning. The sparse information went into a program called Sarops, for Search and Rescue Optimal Planning System. Over the next few hours, searchers added new information — on prevailing currents, places the search helicopters had already flown and some additional clues found by the boat’s captain.

The system couldn’t deduce exactly where Mr. Aldridge was drifting, but with more information, it continued to narrow down the most promising places to search.
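The article does not describe how Sarops works internally, so the following is only a textbook-style sketch of the underlying idea: how an unsuccessful search of one area shifts probability toward the others. The grid cells, the prior probabilities, the detection probability, and the function name `update_after_failed_search` are all hypothetical values and names chosen for illustration.

```python
def update_after_failed_search(prior, searched_cell, detection_prob):
    """Bayes' rule for a search grid after looking in one cell and finding nothing.

    prior          -- list of probabilities that the person is in each cell (sums to 1)
    searched_cell  -- index of the cell that was searched
    detection_prob -- assumed chance of spotting the person if they are in that cell
    """
    # Total probability of the observed outcome, "searched and found nothing".
    p_not_found = 1.0 - prior[searched_cell] * detection_prob

    posterior = []
    for cell, p in enumerate(prior):
        if cell == searched_cell:
            # Person could still be here, but only if the search missed them.
            posterior.append(p * (1.0 - detection_prob) / p_not_found)
        else:
            # Unsearched cells become relatively more likely.
            posterior.append(p / p_not_found)
    return posterior


prior = [0.1, 0.4, 0.3, 0.2]  # hypothetical starting beliefs for four areas
posterior = update_after_failed_search(prior, searched_cell=1, detection_prob=0.8)

for cell, (before, after) in enumerate(zip(prior, posterior)):
    print(f"cell {cell}: {before:.3f} -> {after:.3f}")
```

In this toy example the most promising cell moves from the one just searched to its neighbor, which is the kind of narrowing down the article describes.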

Just before turning back to refuel, a searcher in a helicopter spotted a man clinging to two buoys he had tied together. He had been in the water for 12 hours; he was hypothermic and sunburned but alive.

Even in the jaded 21st century, it was considered something of a miracle.

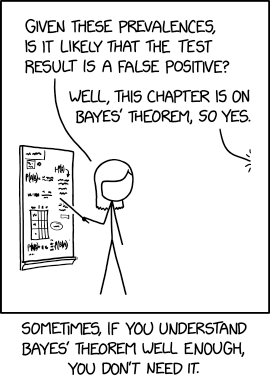

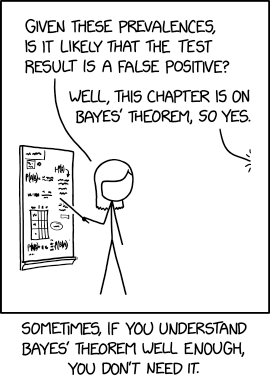

In 1978, Casscells et al.¹ published a small but important study showing that the majority of physicians, house officers, and students overestimated the positive predictive value (PPV) of a laboratory test result using prevalence and false positive rate. Today, interpretation of diagnostic tests is even more critical with the increasing use of medical technology in health care. Accordingly, we replicated the study by Casscells et al.¹ by asking a convenience sample of physicians, house officers, and students the same question: “If a test to detect a disease whose prevalence is 1/1000 has a false positive rate of 5%, what is the chance that a person found to have a positive result actually has the disease, assuming you know nothing about the person’s symptoms or signs?”

Approximately three-quarters of respondents answered the question incorrectly (95% CI, 65% to 87%). In our study, 14 of 61 respondents (23%) gave a correct response, not significantly different from the 11 of 60 correct responses (18%) in the Casscells study (difference, 5%; 95% CI, −11% to 21%). In both studies the most common answer was “95%,” given by 27 of 61 respondents (44%) in our study and 27 of 60 (45%) in the study by Casscells et al.¹ (Figure).
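The question posed to the respondents is a direct application of Bayes' rule. The sketch below works it out under one assumption that the question leaves unstated: that the test catches every true case (a sensitivity of 100 percent). The function name `positive_predictive_value` and its `sensitivity` parameter are my own additions for illustration.

```python
def positive_predictive_value(prevalence: float, false_positive_rate: float,
                              sensitivity: float = 1.0) -> float:
    """P(disease | positive test) by Bayes' rule.

    sensitivity defaults to 1.0, an assumption not given in the quoted question.
    """
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)


ppv = positive_predictive_value(prevalence=1 / 1000, false_positive_rate=0.05)
print(f"PPV = {ppv:.4f}")  # about 0.02, i.e. roughly 2%
```

Under that assumption, out of 1000 people tested, about 1 has the disease and about 50 healthy people test positive anyway, so a positive result means roughly a 2 percent chance of disease, nowhere near the popular answer of 95 percent.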