In a recent class with my future secondary math teachers, we had a fascinating discussion concerning how a teacher should respond to the following question from a student:

Is it ever possible to prove a statement or theorem by proving a special case of the statement or theorem?

Usually, the answer is no. In this series of posts, we’ve seen that a conjecture could be true for the first 40 cases, or even for an astronomically large number of initial cases, yet not always be true. We’ve also explored the computational evidence for various unsolved problems in mathematics, noting that even this very strong computational evidence, by itself, does not provide a proof for all possible cases.

However, there are plenty of examples in mathematics where it is possible to prove a theorem by first proving a special case of the theorem. For the remainder of this series, I’d like to list, in no particular order, some common theorems used in secondary mathematics which are typically proved by first proving a special case.

The following problem appeared on a homework assignment of mine about 30 years ago when I was taking Honors Calculus out of Apostol’s book. I still remember trying to prove this theorem (at the time, very unsuccessfully) like it was yesterday.

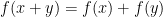

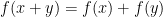

Theorem. If $f: \mathbb{R} \to \mathbb{R}$ is a continuous function so that $f(x+y) = f(x) + f(y)$, then $f(x) = cx$ for some constant $c$.

Proof. The proof mirrors that of the uniqueness of the logarithm function, slowly proving special cases to eventually prove the theorem for all real numbers $x$.

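Case 1. $x = 0$. If we set $x = 0$ and $y = 0$, then

$$f(0 + 0) = f(0) + f(0), \quad \text{so that} \quad f(0) = 2 f(0) \quad \text{and hence} \quad f(0) = 0.$$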

Case 2. $x \in \mathbb{Z}^+$. If $x$ is a positive integer, then

$$f(x) = f(1 + 1 + \dots + 1) = f(1) + f(1) + \dots + f(1) = x f(1).$$

(Technically, this should be proven by induction, but I’ll skip that for brevity.) If we let $c = f(1)$, then $f(x) = cx$.
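For readers who want the induction that Case 2 skips, one way it might go is sketched below. The claim being proved is $f(n) = n f(1)$ for every positive integer $n$; the only ingredient is the additivity hypothesis $f(x+y) = f(x) + f(y)$. This is a sketch of the standard argument, not necessarily the one the original assignment had in mind.

```latex
% Claim: f(n) = n f(1) for every positive integer n.
% Base case n = 1: f(1) = 1 \cdot f(1).
% Inductive step: assume f(n) = n f(1); then
\begin{align*}
f(n+1) &= f(n) + f(1)   && \text{additivity with } x = n,\ y = 1 \\
       &= n f(1) + f(1) && \text{inductive hypothesis} \\
       &= (n+1) f(1).
\end{align*}
```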

Case 3. $x \in \mathbb{Z}^-$. If $x$ is a negative integer, let $x = -n$, where $n$ is a positive integer. Then

$$0 = f(0) = f(n + (-n)) = f(n) + f(-n) = cn + f(x),$$

so that $f(x) = -cn = cx$.

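Case 4. $x \in \mathbb{Q}$. If $x$ is a rational number, then write $x = p/q$, where $p$ and $q$ are integers and $q$ is a positive integer. We’ll use the fact that $p = \frac{p}{q} + \frac{p}{q} + \dots + \frac{p}{q}$, where the sum is repeated $q$ times. By Cases 1 through 3, $f(p) = cp$, and so

$$cp = f(p) = f\left(\frac{p}{q} + \dots + \frac{p}{q}\right) = f\left(\frac{p}{q}\right) + \dots + f\left(\frac{p}{q}\right) = q f\left(\frac{p}{q}\right).$$

Dividing by $q$ gives $f(x) = f(p/q) = cp/q = cx$.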

Case 5. $x \in \mathbb{R}$. If $x$ is a real number, then let $\{r_n\}$ be a sequence of rational numbers that converges to $x$, so that

$$\lim_{n \to \infty} r_n = x.$$

Then, since $f$ is continuous,

$$f(x) = f\left(\lim_{n \to \infty} r_n\right) = \lim_{n \to \infty} f(r_n) = \lim_{n \to \infty} c r_n = c \lim_{n \to \infty} r_n = cx.$$

QED
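To make the last two cases concrete, here is a small numerical sketch in Python. It is only an illustration, not part of the proof: the constant `C`, the helper names `f_on_rationals` and `rational_approximations`, and the choice of $x = \sqrt{2}$ are all my own assumptions. It uses exact rational arithmetic to show the values $f(r_n) = c r_n$ forced by Case 4 approaching $cx$ as the rationals $r_n$ approach $x$, which is exactly the limit that continuity lets us take in Case 5.

```python
from fractions import Fraction
import math

# Hypothetical value of c = f(1), chosen only for illustration.
C = Fraction(3, 2)


def f_on_rationals(r: Fraction) -> Fraction:
    """Value forced by Cases 1-4: f(r) = c * r for every rational r."""
    return C * r


def rational_approximations(x: float, count: int) -> list[Fraction]:
    """Decimal truncations r_n = floor(x * 10^n) / 10^n, for n = 1..count.

    Each r_n is rational, and r_n converges to x as n grows.
    """
    return [Fraction(math.floor(x * 10**n), 10**n) for n in range(1, count + 1)]


x = math.sqrt(2)
approximations = rational_approximations(x, 8)

# Show r_n and f(r_n) side by side; the second column approaches c * x.
for r in approximations:
    print(float(r), float(f_on_rationals(r)))

# Gap between the last f(r_n) and the limiting value c * x.
final_gap = abs(float(f_on_rationals(approximations[-1])) - float(C) * x)
```

The exact `Fraction` type keeps the rational steps free of rounding error, so any remaining gap comes only from how far $r_n$ is from $x$.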

Random Thought #1: The continuity of the function $f$ was only used in Case 5 of the above proof. I’m nearly certain that there’s a pathological discontinuous function that satisfies $f(x+y) = f(x) + f(y)$ which is not the function $f(x) = cx$. However, I don’t know what that function might be.

Random Thought #2: For what it’s worth, this same idea can be used to solve the following problem that was posed during UNT’s Problem of the Month competition in January 2015. I won’t solve the problem here so that my readers can have the fun of trying to solve it for themselves.

Problem. Determine all nonnegative continuous functions that satisfy

.
