I had forgotten the precise assumptions on uniform convergence that guarantee that an infinite series can be differentiated term by term, so that one can safely conclude

$$\frac{d}{dx} \sum_{n=1}^{\infty} f_n(x) = \sum_{n=1}^{\infty} f_n'(x).$$

This was part of my studies in real analysis as a student, so I remembered there was a theorem but I had forgotten the details.

So, like just about everyone else on the planet, I went to Google to refresh my memory even though I knew that searching for mathematical results on Google can be iffy at best.

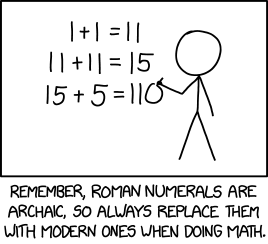

And I was not disappointed. Behold this laughably horrible false analogy (and even worse graphic) that I found on chegg.com:

> Suppose Arti has to plan a birthday party and has lots of work to do like arranging stuff for decorations, planning venue for the party, arranging catering for the party, etc. All these tasks can not be done in one go and so need to be planned. Once the order of the tasks is decided, they are executed step by step so that all the arrangements are made in time and the party is a success.
>
> Similarly, in Mathematics when a long expression needs to be differentiated or integrated, the calculation becomes cumbersome if the expression is considered as a whole but if it is broken down into small expressions, both differentiation and the integration become easy.

Pedagogically, I’m all for using whatever technique an instructor might deem necessary to “sell” abstract mathematical concepts to students. Nevertheless, I’m pretty sure that this particular party-planning analogy has no potency for students who have progressed far enough to rigorously study infinite series.