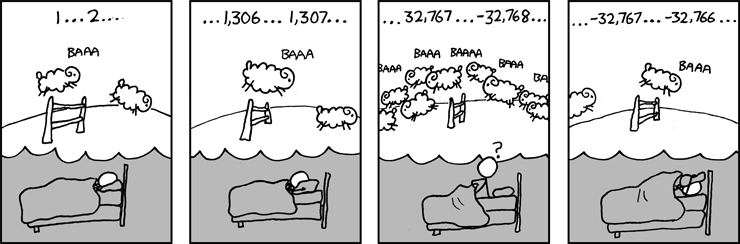

Source: http://www.xkcd.com/571/

This probably requires a little explanation of the nuances of how integers are stored in computers. A 16-bit signed integer is stored as 16 binary digits, where the first digit indicates whether the number is positive ($0$) or negative ($1$). Because this first digit represents the sign, the counting system is a little different from regular counting in binary.

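For starters,

$0000000000000000$ represents $0$

$0000000000000001$ represents $1$

$0000000000000010$ represents $2$

$\vdots$

$0111111111111111$ represents $32767$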

For the next number, $1000000000000000$, there’s a catch. The first $1$ means that this should represent a negative number. However, there’s no need for this to stand for $-0$, since we already have a representation for $0$. So, to prevent representing the same number twice, we’ll say that this number represents $-32768$, and we’ll follow this rule for all representations starting with $1$. So

$1000000000000000$ represents $-32768$

$1000000000000001$ represents $-32767$

$1000000000000010$ represents $-32766$

$\vdots$

$1111111111111111$ represents $-1$

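Because of this nuance, the following C program will, on typical systems where a short is 16 bits, print the unexpected answer of $-32768$ (symbolized by the sheep going backwards in the comic strip).

    #include <stdio.h>

    int main(void)
    {
        short x = 32767;
        x = x + 1;  /* the sum is stored back into 16 bits and wraps around */
        printf("%d\n", x);
        return 0;
    }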

For more details, see http://en.wikipedia.org/wiki/Integer_%28computer_science%29