The following problem in differential equations has a very practical application for anyone who either (1) has taken out a loan to buy a house or a car or (2) is trying to pay off credit card debt. To my surprise, most math majors haven’t thought through the obvious applications of exponential functions as a means of engaging their future students, even though these applications are directly pertinent to their lives (both the students’ and the teachers’).

You have a balance of \$2,000 on your credit card. Interest is compounded continuously with a relative rate of growth of 25% per year. If you pay the minimum amount of \$50 per month (or \$600 per year), how long will it take for the balance to be paid?

In yesterday’s post, I showed that the answer to this question was about 7.2 years. To obtain this answer, I started with the differential equation

$$\frac{dA}{dt} = 0.25A - 600,$$

which, given the initial condition $A(0) = 2000$, has solution

$$A(t) = 2400 - 400e^{0.25t}.$$
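For readers who want to sanity-check that solution without redoing the calculus, here is a minimal sketch in Python. It assumes the setup of the problem above (a balance that grows at 25% per year while \$600 per year is paid continuously) and compares a crude step-by-step simulation against the closed form $2400 - 400e^{0.25t}$. The function names `euler_balance` and `closed_form`, and the choice of $t = 5$ years, are my own and purely illustrative.

```python
import math

def euler_balance(t_end, start=2000.0, rate=0.25, payment=600.0, steps=100_000):
    """Approximate the balance after t_end years using many tiny time steps."""
    dt = t_end / steps
    balance = start
    for _ in range(steps):
        # In each tiny step, interest is added and the payment is subtracted.
        balance += (rate * balance - payment) * dt
    return balance

def closed_form(t):
    """Closed-form balance for the specific numbers in this problem."""
    return 2400 - 400 * math.exp(0.25 * t)

t = 5.0
print(f"simulated:   {euler_balance(t):.2f}")
print(f"closed form: {closed_form(t):.2f}")
```

The two printed values should agree closely, and shrinking the step size (raising `steps`) brings them closer still.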

Today, I’ll give some pedagogical thoughts about how this problem, and other similar problems inspired by financial considerations, could fit into a Precalculus course… and hopefully improve the financial literacy of high school students.

I’ve read many Precalculus books; few of them apply exponential functions to paying off credit-card debt (or a mortgage or car loan). Of course, yesterday’s derivation was well above the comprehension level of students in Precalculus. However, there’s no reason why Precalculus students couldn’t be given the general formula

$$A(t) = \frac{k}{r} + \left( P - \frac{k}{r} \right) e^{rt},$$

where $P$ is the initial amount, $r$ is the relative rate of growth, and $k$ is the amount paid per year. In other words, students could be given the formula without the full explanation of where it comes from. After all, many Precalculus textbooks give the formula for Newton’s Law of Cooling (the subject of a future post) with neither derivation nor explanation (though its derivation is nearly identical to the work of yesterday’s post), so I don’t see why giving students the above formula for paying off credit-card debt isn’t more common.
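Students with access to a computer could also experiment with the formula directly. The following is only a sketch, assuming the general formula takes the form "balance = payment/rate + (initial amount - payment/rate) times e^(rate × time)"; the function and parameter names (`balance`, `payoff_time`, `principal`, `rate`, `payment`) are hypothetical choices of mine, not anything from a textbook.

```python
import math

def balance(t, principal, rate, payment):
    """Balance after t years with continuous interest and continuous payments."""
    # payment / rate is the balance at which interest exactly cancels the payments.
    break_even = payment / rate
    return break_even + (principal - break_even) * math.exp(rate * t)

def payoff_time(principal, rate, payment):
    """Years until the balance hits zero, or None if it never does."""
    if payment <= rate * principal:
        return None  # the payments do not outpace the interest
    # Setting balance(t) = 0 and solving for t with a logarithm.
    return math.log(payment / (payment - rate * principal)) / rate

years = payoff_time(2000, 0.25, 600)
print(f"{years:.1f} years")
```

Returning `None` when the yearly payment does not exceed the yearly interest on the starting balance is a design choice of this sketch; it flags the case where the debt would never be paid off.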

Plugging in the initial amount $P = 2000$, the relative rate of growth $r = 0.25$, and the yearly payment $k = 600$ into this equation again yields the function

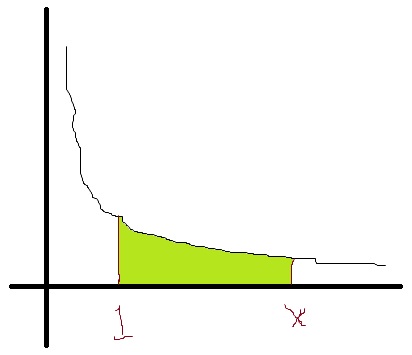

,

from which we find, by solving $2400 - 400e^{0.25t} = 0$, that it will take $t = 4 \ln 6 \approx 7.2$ years to pay off the debt.

A natural follow-up question is “How much money actually was spent to pay off this debt?” By this point, the answer is quite easy: the debtor paid \$600 per year for $4 \ln 6 \approx 7.2$ years, and so the amount spent is

$$600 \times 4 \ln 6 \approx \$4300.$$

When I teach this topic in differential equations, I let that answer sink in for a while. The original debt was only \$2000, but ultimately \$4300 needs to be paid over 7.2 years in order to pay off the debt.

The natural question is, “Why did it take so long?” Of course, the answer is that the debtor only paid the minimum amount: \$50 per month, or \$600 per year. It stands to reason that if extra money were paid each month, then the debt would be paid off faster and at less expense.

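To give one example, let’s repeat the calculation if the debtor paid twice as much (\$100 per month, or \$1200 per year). Then the amount owed as a function of time would be

$$A(t) = 4800 - 2800e^{0.25t}.$$

To find when the credit card will be paid off, we set $A(t) = 0$:

$$2800e^{0.25t} = 4800$$

$$e^{0.25t} = \frac{12}{7}$$

$$t = 4 \ln \frac{12}{7} \approx 2.16 \text{ years}.$$

That’s certainly a lot faster! The amount that’s spent over that time is also considerably less:

$$1200 \times 4 \ln \frac{12}{7} \approx \$2587.$$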
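The comparison between the two payment plans can be turned into a short experiment. The sketch below assumes the same continuous model used throughout this post (a \$2000 balance growing at 25% per year); the helper name `payoff_summary` is hypothetical, and students could try other monthly payments in the loop.

```python
import math

def payoff_summary(principal, rate, monthly_payment):
    """Return (years to pay off, total dollars paid) under the continuous model."""
    yearly = 12 * monthly_payment
    if yearly <= rate * principal:
        raise ValueError("payment does not outpace the interest")
    # Payoff time from setting the balance formula equal to zero.
    years = math.log(yearly / (yearly - rate * principal)) / rate
    return years, yearly * years

for monthly in (50, 100):
    years, total = payoff_summary(2000, 0.25, monthly)
    print(f"${monthly}/month: {years:.2f} years, ${total:.2f} paid in total")
```

Doubling the monthly payment cuts the payoff time by far more than half, which is the point worth letting students discover for themselves.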

So, along with being a good way to practice proficiency with exponential and logarithmic functions, this problem lends itself to students discovering some basic principles of financial literacy.